How to Build a ChatGPT App (April 2026)

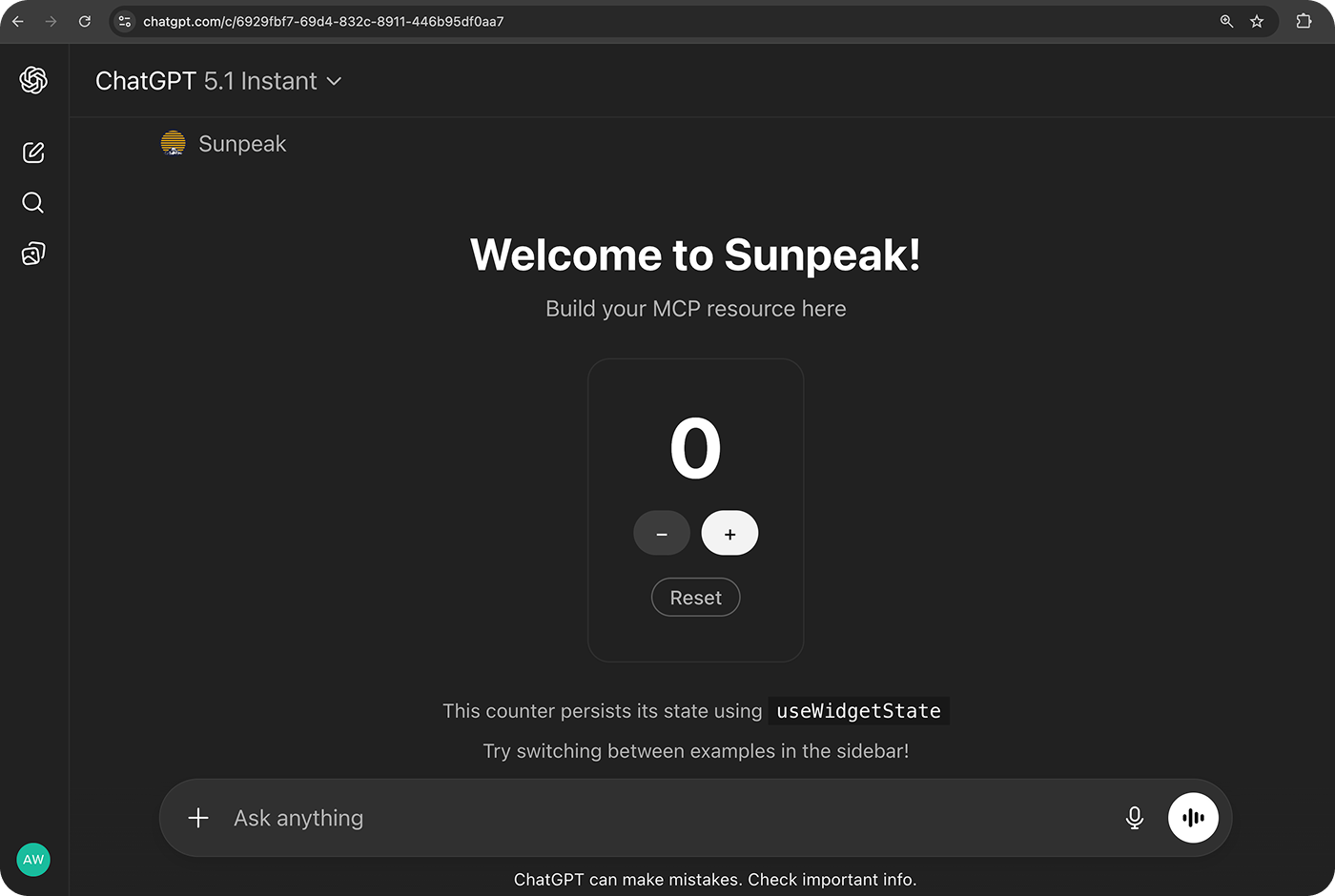

A simple counter app built and deployed with sunpeak.

TL;DR: ChatGPT Apps are MCP Apps that run interactive UIs inside ChatGPT conversations. You build an MCP server with tools and UI resources, and ChatGPT renders your UI in a sandboxed iframe. The fastest way to start: npx sunpeak new sunpeak-app && cd sunpeak-app && pnpm dev.

What Is a ChatGPT App?

A ChatGPT App is an interactive application that runs inside a ChatGPT conversation. When a user triggers your app (by @-mentioning it or selecting it from the tools menu), ChatGPT calls your MCP server’s tools and renders your UI directly in the chat.

ChatGPT Apps are built on the MCP Apps protocol, the first official extension to the Model Context Protocol. MCP Apps went GA in January 2026 as an open standard co-developed by Anthropic and OpenAI. The protocol extends MCP with a standard for interactive UIs, so the same app code works in ChatGPT, Claude (via Connectors), Claude Desktop, VS Code GitHub Copilot, Goose, Postman, and MCPJam. You can check the full list on the MCP client extension matrix.

ChatGPT Apps are available to all logged-in ChatGPT users on Free, Go, Plus, Pro, Business, Enterprise, and Edu plans (currently outside the EEA, Switzerland, and the UK).

Here’s the architecture at a high level:

- Your MCP server declares tools (functions the AI can call) and resources (UI templates using the ui:// URI scheme).

- ChatGPT (the host) calls your tools when the AI decides to use them, then fetches and renders the associated UI resource.

- Your UI runs in a sandboxed iframe. It communicates with ChatGPT via postMessage using JSON-RPC to receive tool data and call other tools.
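To make the three roles concrete, here is a minimal in-memory sketch of that flow. It is not the MCP SDK or sunpeak API: `AppServer`, `hostInvoke`, the `increment` tool, and the `ui://counter/view.html` URI are all hypothetical names chosen for illustration.

```typescript
// Hypothetical in-memory model of the flow; none of these names come from a real SDK.
type ToolResult = { structuredContent: Record<string, unknown> };

interface Tool {
  name: string;
  resourceUri: string; // points at a ui:// resource
  handler: (args: Record<string, unknown>) => ToolResult;
}

interface AppServer {
  tools: Map<string, Tool>;
  resources: Map<string, string>; // ui:// URI -> HTML template
}

// Conceptually what a host does: look up the tool, fetch its linked UI, run the tool.
function hostInvoke(server: AppServer, toolName: string, args: Record<string, unknown>) {
  const tool = server.tools.get(toolName);
  if (!tool) throw new Error(`Unknown tool: ${toolName}`);
  const html = server.resources.get(tool.resourceUri);
  if (html === undefined) throw new Error(`Missing resource: ${tool.resourceUri}`);
  const result = tool.handler(args);
  // A real host would now load `html` in a sandboxed iframe and post `result` to it.
  return { html, result };
}

const server: AppServer = {
  tools: new Map<string, Tool>([
    ["increment", {
      name: "increment",
      resourceUri: "ui://counter/view.html",
      handler: (args) => ({ structuredContent: { count: Number(args.count ?? 0) + 1 } }),
    }],
  ]),
  resources: new Map<string, string>([
    ["ui://counter/view.html", "<div id=\"root\"></div>"],
  ]),
};

const outcome = hostInvoke(server, "increment", { count: 2 });
```

In a real app the host and server are separate processes talking MCP, but the dependency order is the same: the tool names a UI resource, and the host pairs the tool result with that resource.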

This is the same architecture behind Claude Connectors and MCP Apps on every other supported host. Build a ChatGPT App on the MCP Apps protocol and you get multi-host support for free.

Official Resources

Here’s what’s available from OpenAI and the MCP community:

- OpenAI Apps SDK Documentation - OpenAI’s official docs covering app concepts, UX guidelines, building, deployment, and submission.

- MCP Apps in ChatGPT - OpenAI’s guide to MCP Apps compatibility in ChatGPT, including how MCP Apps and the Apps SDK relate.

- Build your MCP server - OpenAI’s walkthrough for building an MCP server that works as a ChatGPT App.

- App submission guidelines - Requirements and review criteria for the ChatGPT App Directory.

- openai/apps-sdk-ui - OpenAI’s open-source React component library with Radix primitives, design tokens, and Tailwind 4 integration.

- MCP Apps Extension Specification - The official MCP spec for interactive UIs across all MCP hosts.

- ext-apps SDK and Examples - The open-source SDK (v1.1.2), reference implementation, and 15+ example apps (maps, 3D viewers, PDF readers, dashboards, and starter templates for React, Vue, Svelte, Preact, Solid, and vanilla JS).

- MCP Apps Blog Post - The official MCP blog post announcing the MCP Apps extension.

OpenAI’s own Apps SDK and the MCP Apps standard share the same foundation. OpenAI contributed elements of the Apps SDK to the MCP Apps spec, so building on MCP Apps gives you compatibility with ChatGPT and every other MCP Apps host. The MCP Apps in ChatGPT guide recommends building with the MCP Apps standard keys and bridge by default, and reaching for window.openai only when you need ChatGPT-specific features like file uploads, instant checkout, or host modals.

Getting Started

1. Install and Scaffold

Make sure you have Node.js 20+ installed. Then create a new project:

npx sunpeak new sunpeak-app

cd sunpeak-app

This generates a project with a working MCP server, tool handlers, Zod schemas, and a sample UI resource. The scaffolded code follows the MCP Apps spec, so it works in ChatGPT, Claude, and other MCP Apps hosts out of the box.

If you already have an MCP server and just want to test it, you can skip scaffolding and run npx sunpeak inspect --server <url> to open the inspector against your existing server.

2. Start the Dev Server

pnpm dev

This starts two servers: an MCP App inspector at localhost:3000 that replicates the ChatGPT runtime, and an MCP server at localhost:8000 with hot reload. Every time you save a file, the inspector updates automatically.

The inspector lets you trigger tools, see your UI render, switch between display modes (inline, PiP, fullscreen), toggle between ChatGPT and Claude host themes, and test the full app lifecycle without needing a ChatGPT account.

3. Connect to the Real ChatGPT

When you’re ready to test in the real ChatGPT, create a tunnel so ChatGPT can reach your local server:

ngrok http 8000

Then add the ngrok forwarding URL with the /mcp path in ChatGPT:

- Go to Settings > Apps & Connectors > Advanced settings and enable developer mode.

- Go to Settings > Connectors > Create.

- Enter a connector name, description, and the ngrok URL (e.g. https://abc123.ngrok.app/mcp).

- Open a new chat, click +, select your connector, and prompt the model to call your tools.
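The connector URL in the steps above is just the tunnel's forwarding URL with the /mcp path appended. If you script your setup, a small helper avoids trailing-slash mistakes. This is a sketch: `connectorUrl` is a hypothetical helper, and the https check is an assumption based on the example URL above.

```typescript
// Hypothetical helper: turn a tunnel forwarding URL into the connector URL.
function connectorUrl(forwardingUrl: string, path: string = "/mcp"): string {
  const url = new URL(forwardingUrl);
  // Assumption: tunnel URLs are https, as in the example above.
  if (url.protocol !== "https:") {
    throw new Error("Expected an https forwarding URL");
  }
  url.pathname = path; // replaces any existing path, including a bare "/"
  return url.toString();
}

const example = connectorUrl("https://abc123.ngrok.app");
```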

When you update your tools, click Refresh in Settings > Connectors to sync the metadata.

This requires a ChatGPT account with developer mode enabled. For more on developing without a paid account, see How to Build a ChatGPT App Without a Paid Account. For a full walkthrough of connecting to ChatGPT, see OpenAI’s Connect from ChatGPT guide.

How Your App’s UI Works

Your app’s UI is a regular web page (HTML, CSS, JavaScript) that ChatGPT renders in a sandboxed iframe. The key difference from a normal web page: your UI communicates with ChatGPT through a postMessage bridge using JSON-RPC instead of making direct API calls.
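To show what "JSON-RPC over postMessage" means in practice, here is a minimal sketch of the request/response matching a bridge has to do. The envelope fields follow JSON-RPC 2.0, and `tools/call` with `name` and `arguments` is the standard MCP tool-call method. `BridgeClient` is a hypothetical class, the transport is simulated with a plain callback so the code runs outside a browser, and which methods a given host accepts from the iframe is defined by the MCP Apps spec, not by this sketch.

```typescript
// JSON-RPC 2.0 envelopes; the postMessage transport is simulated with a callback.
interface JsonRpcRequest { jsonrpc: "2.0"; id: number; method: string; params?: unknown }
interface JsonRpcResponse {
  jsonrpc: "2.0";
  id: number;
  result?: unknown;
  error?: { code: number; message: string };
}

type Post = (message: JsonRpcRequest) => void;
type Pending = { resolve: (value: unknown) => void; reject: (error: Error) => void };

class BridgeClient {
  private nextId = 1;
  private readonly pending = new Map<number, Pending>();
  private readonly post: Post;

  constructor(post: Post) {
    this.post = post;
  }

  // Send a request and remember its id so the response can be matched later.
  request(method: string, params?: unknown): Promise<unknown> {
    const id = this.nextId++;
    return new Promise((resolve, reject) => {
      this.pending.set(id, { resolve, reject });
      this.post({ jsonrpc: "2.0", id, method, params });
    });
  }

  // In a real iframe, call this from a window "message" event listener.
  receive(response: JsonRpcResponse): void {
    const entry = this.pending.get(response.id);
    if (!entry) return; // not ours, or already settled
    this.pending.delete(response.id);
    if (response.error) entry.reject(new Error(response.error.message));
    else entry.resolve(response.result);
  }
}

// Simulated round trip: capture what would be posted, then feed back a response.
const sent: JsonRpcRequest[] = [];
const client = new BridgeClient((message) => { sent.push(message); });
const reply = client.request("tools/call", { name: "increment", arguments: { count: 2 } });
client.receive({ jsonrpc: "2.0", id: sent[0].id, result: { count: 3 } });
```

In a browser, `post` would wrap `window.parent.postMessage`, and `receive` would be wired to incoming message events after checking their origin.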

When ChatGPT calls one of your tools, your MCP server returns data along with a reference to a ui:// resource (declared in the tool’s _meta.ui.resourceUri field). ChatGPT can preload the resource before the tool finishes, so your UI can start rendering while data is still streaming. Once the tool completes, ChatGPT pushes the result to your UI. Your UI can then call other tools back through the host, which means your app can be interactive across multiple turns of conversation.
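The link between a tool and its UI is only a string, so it is easy to break with a typo. The sketch below models the `_meta.ui.resourceUri` field described above and checks that every linked URI uses the ui:// scheme and matches a declared resource. The interfaces are trimmed to the fields discussed here, and `findBrokenUiLinks` plus the tool and resource names are hypothetical.

```typescript
// Shapes trimmed to the fields discussed above; real definitions carry more (schemas, etc.).
interface ToolDefinition {
  name: string;
  description: string;
  _meta?: { ui?: { resourceUri?: string } };
}

interface UiResource {
  uri: string;  // expected to use the ui:// scheme
  text: string; // the HTML the host renders in the iframe
}

// Return the names of tools whose UI link is malformed or points at nothing.
function findBrokenUiLinks(tools: ToolDefinition[], resources: UiResource[]): string[] {
  const known = new Set(resources.map((r) => r.uri));
  return tools
    .filter((t) => {
      const uri = t._meta?.ui?.resourceUri;
      if (uri === undefined) return false; // tools without a UI are fine
      return !uri.startsWith("ui://") || !known.has(uri);
    })
    .map((t) => t.name);
}

const resources: UiResource[] = [
  { uri: "ui://counter/view.html", text: "<div id=\"root\"></div>" },
];

const tools: ToolDefinition[] = [
  { name: "increment", description: "Add one", _meta: { ui: { resourceUri: "ui://counter/view.html" } } },
  { name: "reset", description: "Back to zero", _meta: { ui: { resourceUri: "ui://counter/missing.html" } } },
  { name: "ping", description: "No UI attached" },
];

const broken = findBrokenUiLinks(tools, resources);
```

A check like this fits naturally in a unit test, since a broken link otherwise only shows up when the host tries to render the tool.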

The app runs in a sandbox that prevents access to the parent window’s DOM, cookies, or local storage. You can specify a content security policy (CSP) in _meta.ui.csp to control which external origins your app can load resources from, and request additional capabilities like microphone or camera access via _meta.ui.permissions.
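As a rough illustration of what a CSP declaration buys you, the sketch below turns a list of allowed origins into a Content-Security-Policy string. The field names `connectDomains` and `resourceDomains` are assumptions about the shape of `_meta.ui.csp` (check the MCP Apps spec for the exact schema), and `toCspHeader` is a hypothetical function. The policy a real host generates will differ.

```typescript
// Assumed field names; check the MCP Apps spec for the exact schema of _meta.ui.csp.
interface UiCsp {
  connectDomains?: string[];  // origins the UI may fetch from
  resourceDomains?: string[]; // origins the UI may load scripts, styles, images from
}

// Illustrative only: one way a host could translate the declaration into a CSP string.
function toCspHeader(csp: UiCsp): string {
  const connect = ["'self'", ...(csp.connectDomains ?? [])];
  const resource = ["'self'", ...(csp.resourceDomains ?? [])];
  return [
    "default-src 'none'", // deny everything not listed below
    `connect-src ${connect.join(" ")}`,
    `img-src ${resource.join(" ")}`,
    `script-src ${resource.join(" ")}`,
    `style-src ${resource.join(" ")}`,
  ].join("; ");
}

const header = toCspHeader({
  connectDomains: ["https://api.example.com"],
  resourceDomains: ["https://cdn.example.com"],
});
```

The takeaway is that anything you do not list is blocked, so a missing origin usually surfaces as a failed fetch or a missing image rather than an explicit error in your UI.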

OpenAI publishes apps-sdk-ui, an open-source component library with Radix primitives, Tailwind 4 integration, and design tokens that match ChatGPT’s native look. It’s optional but useful if you want your app to feel native in ChatGPT. For cross-host styling, see MCP App Styling with Host CSS Variables.

You don’t need to manage the postMessage bridge yourself. The MCP Apps SDK provides an App class and utilities that handle the communication layer. Frameworks like sunpeak build on top of this with React hooks like useToolData that give your component typed access to tool output without writing any bridge code.

Display Modes

ChatGPT Apps support three display modes that control how your UI appears:

- Inline renders your app’s UI within the conversation flow, like a rich message.

- Picture-in-picture (PiP) shows your app in a floating panel that persists as the user scrolls through the conversation.

- Fullscreen takes over the main chat area, giving your app maximum screen space.

You set the display mode in your tool definition. For a deeper look at each mode and when to use it, see the ChatGPT App Display Mode Reference. Apps can also request display mode transitions at runtime, so your UI can start inline and expand to fullscreen when the user needs more space. If you’re building for both ChatGPT and Claude, be aware that Claude Connectors handle display modes slightly differently, so testing across hosts matters.
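Because hosts differ in which modes they offer, it can help to decide up front what your UI does when its preferred mode is unavailable. The sketch below is one possible fallback policy, not host behavior: the string identifiers `inline`, `pip`, and `fullscreen` are assumed spellings, and `resolveDisplayMode` and its fallback order are hypothetical.

```typescript
// Assumed identifiers for the three modes described above.
type DisplayMode = "inline" | "pip" | "fullscreen";

// Pick the requested mode if the host offers it, otherwise the closest alternative.
function resolveDisplayMode(requested: DisplayMode, available: DisplayMode[]): DisplayMode {
  if (available.includes(requested)) return requested;
  // Design choice of this sketch: prefer the least disruptive alternative first.
  const fallbackOrder: Record<DisplayMode, DisplayMode[]> = {
    fullscreen: ["pip", "inline"],
    pip: ["inline", "fullscreen"],
    inline: ["pip", "fullscreen"],
  };
  const fallback = fallbackOrder[requested].find((mode) => available.includes(mode));
  if (!fallback) throw new Error("Host offers no display modes");
  return fallback;
}

const onInlineOnlyHost = resolveDisplayMode("fullscreen", ["inline"]);
const onFullHost = resolveDisplayMode("pip", ["inline", "pip", "fullscreen"]);
```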

Testing Your App

Testing in the real ChatGPT on every code change is slow: you’d need to refresh, re-trigger the tool, and wait for the AI to respond each time. That adds up to a 4-click manual refresh cycle per code change, per host. A local inspector gives you instant feedback with hot reload.

For automated testing, sunpeak includes a built-in testing framework. Run pnpm test to execute both unit and e2e tests, so you can validate your app in CI/CD without needing a live ChatGPT connection. Use pnpm test:visual for visual regression tests. See the complete guide to testing ChatGPT Apps for the full setup, or MCP App CI/CD with GitHub Actions for pipeline configuration.

OpenAI also provides a test integration step in their deployment docs, which covers verifying your app works correctly after connecting to ChatGPT.

Submitting to the App Directory

The ChatGPT App Directory is live and accepting submissions. To submit your app:

- Verify your account. Complete identity verification (individual or business) on the OpenAI Developer Platform. You need the Owner role in your organization.

- Deploy your MCP server to a publicly accessible URL with a proper content security policy. sunpeak apps can be deployed with pnpm build && pnpm start behind any hosting provider, or see the MCP App deployment guide for options including Cloudflare, Vercel, and others.

- Prepare your submission materials: app name, logo, description, screenshots, a privacy policy URL, test prompts with expected responses, and localization details.

- Submit through the Developer Platform. You’ll receive a Case ID via email to track your review. Only one version of each app can be published or under review at a time.

OpenAI runs both automated scans and manual reviews. Common rejection reasons include server connectivity issues, incorrect test case results, undisclosed user data, and mismatched tool annotations. The full requirements are in OpenAI’s app submission guidelines. When approved, your app appears in the directory and OpenAI automatically creates a Codex plugin for distribution too.

Building for Multiple Hosts

Because ChatGPT Apps use the MCP Apps protocol, your app works wherever MCP Apps are supported. As of April 2026, that’s seven hosts: ChatGPT, Claude, Claude Desktop, VS Code GitHub Copilot, Goose, Postman, and MCPJam.

The main things to watch when targeting multiple hosts are display mode differences and host-specific CSS variables. For a practical walkthrough, see Building One MCP App for ChatGPT and Claude. You can also read How to Build a Claude Connector for details on how the same MCP server works on the Claude side.

ChatGPT offers optional extensions like file uploads, instant checkout, and host modals through window.openai. These should feature-detect and degrade gracefully in other hosts. The MCP Apps in ChatGPT guide covers what’s ChatGPT-specific versus what’s part of the open standard.
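Feature detection here can be as simple as checking whether a function exists before you rely on it. The sketch below takes the global scope as a parameter so it runs outside a browser. `hasOpenAiFeature` and `chooseUploadUi` are hypothetical helpers, and `uploadFile` is a placeholder method name used for illustration, so check OpenAI's docs for the actual window.openai surface.

```typescript
// `scope` stands in for `window`, so the sketch also runs outside a browser.
type HostScope = { openai?: Record<string, unknown> };

// True only when the host exposes window.openai and the named member is callable.
function hasOpenAiFeature(scope: HostScope, feature: string): boolean {
  return typeof scope.openai?.[feature] === "function";
}

// Choose a UI path: the ChatGPT-specific one when available, a portable one otherwise.
function chooseUploadUi(scope: HostScope): "host-upload" | "file-input" {
  // "uploadFile" is a placeholder name for illustration.
  return hasOpenAiFeature(scope, "uploadFile") ? "host-upload" : "file-input";
}

const inOtherHost = chooseUploadUi({});
const inChatGpt = chooseUploadUi({ openai: { uploadFile: () => undefined } });
```

In the browser you would pass `window` (cast to a compatible type) as `scope`, and keep the fallback path as the default so the app still works in hosts without window.openai.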

sunpeak’s inspector lets you switch between ChatGPT and Claude host themes during development, so you can catch cross-host issues before deploying. For styling, see MCP App Styling with Host CSS Variables to make your UI adapt to each host’s design tokens.

Get Started

npx sunpeak new

Further Reading

- ChatGPT App Tutorial - a hands-on walkthrough that builds a working app step by step.

- MCP App Tutorial - build an MCP App from scratch with tools and resources.

- Building One MCP App for ChatGPT and Claude - how to target multiple hosts from one codebase.

- ChatGPT App Display Mode Reference - documents inline, fullscreen, and PiP modes.

- The Complete Guide to Testing ChatGPT Apps - covers unit tests, end-to-end tests, and CI/CD.

- How to Build a Claude Connector - build the same app for Claude using Connectors.

- MCP App CI/CD with GitHub Actions - automate your ChatGPT App testing pipeline.

- MCP Apps Extension Specification - the open standard that powers ChatGPT Apps, Claude Connectors, and more.

- sunpeak quickstart - official docs walkthrough.

Frequently Asked Questions

What is the fastest way to build a ChatGPT App?

Run "npx sunpeak new" to scaffold a project, then run "pnpm dev" to get a local inspector at localhost:3000 and an MCP server at localhost:8000 with hot reload. You can have a working ChatGPT App running locally in under two minutes.

Do I need a paid ChatGPT subscription to develop ChatGPT Apps?

No. You can build and test ChatGPT Apps locally without any ChatGPT account using a local MCP Apps inspector like sunpeak's. A ChatGPT account with developer mode enabled is only needed when you want to connect your app to the real ChatGPT for live testing. ChatGPT Apps are available to users on Free, Go, Plus, Pro, Business, Enterprise, and Edu plans.

What are ChatGPT Apps built on?

ChatGPT Apps are built on the MCP Apps protocol, the first official extension to the Model Context Protocol (MCP). Your app is an MCP server that declares tools (actions the AI can call) and resources (UI views rendered in sandboxed iframes). ChatGPT also supports its own Apps SDK, and the two approaches share the same underlying MCP Apps standard.

How do I connect my ChatGPT App to the real ChatGPT for testing?

Run your MCP server locally, create a tunnel with ngrok (ngrok http 8000), then add the ngrok URL with the /mcp path in ChatGPT. Go to Settings > Apps & Connectors > Advanced settings to enable developer mode, then go to Settings > Connectors > Create and enter your server URL.

What display modes do ChatGPT Apps support?

ChatGPT Apps support three display modes: inline (renders within the conversation flow), picture-in-picture or PiP (a floating panel), and fullscreen (takes over the chat area). You set the display mode in your tool definition, and apps can also request display mode transitions at runtime.

How do I submit my ChatGPT App to the App Directory?

You need a verified OpenAI developer account with the Owner role in your organization. Submit through the OpenAI Developer Platform with your publicly accessible MCP server URL, app metadata (name, description, logo, screenshots), test prompts with expected responses, a privacy policy URL, and CSP configuration. Apps go through automated scans and manual review before appearing in the directory.

Can I build one app that works in both ChatGPT and Claude?

Yes. Because ChatGPT Apps use the MCP Apps protocol, the same MCP server and UI resources work in any host that supports MCP Apps. As of April 2026, that includes ChatGPT, Claude, Claude Desktop, VS Code GitHub Copilot, Goose, Postman, and MCPJam. sunpeak makes cross-host development easier by letting you test in multiple host inspectors from one codebase.

What is sunpeak and why should I use it for ChatGPT App development?

sunpeak is an open-source MCP App framework that works as a ChatGPT App framework and Claude Connector framework. It gives you a local inspector that replicates the ChatGPT and Claude runtimes, a hot-reloading MCP server, pre-built UI components, a built-in testing framework, and CLI tools for building and deploying apps. It removes the need for a paid ChatGPT account during development and spares you the manual refresh cycle of testing in the real ChatGPT.