The Complete Guide to Testing ChatGPT Apps and MCP Apps (April 2026)

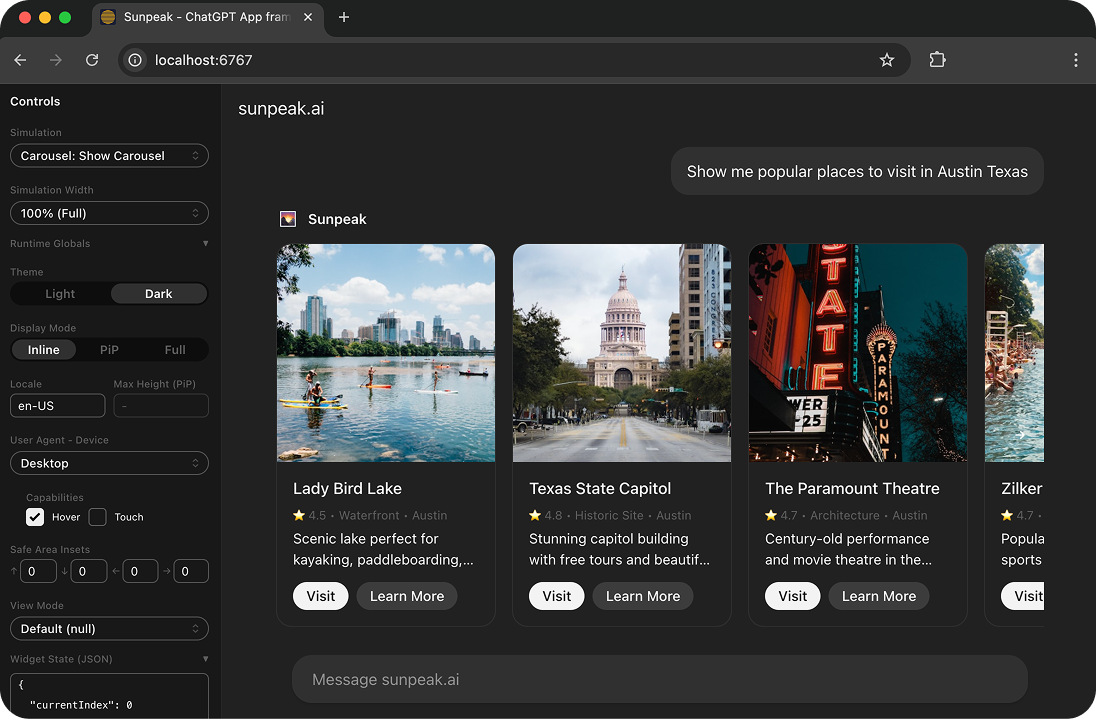

The sunpeak ChatGPT App inspector with testing capabilities.

[Updated 2026-04-06] Testing ChatGPT Apps and MCP Apps is brutal. Your App needs to work properly with all kinds of states: it needs to account for host runtime state, host theme state, MCP server state, backend state, and now it needs to work across ChatGPT and Claude (each with its own UI chrome, color palette, rendering behavior, and runtime APIs).

Without proper testing infrastructure, you’re either deploying blind or testing by hand: burning credits on every test, paying for subscriptions your team barely uses, and losing hours to manual checks.

TL;DR: Use sunpeak’s built-in testing framework: pnpm test runs both unit and e2e tests. Use pnpm test:unit or pnpm test:e2e to run them separately, pnpm test:visual for visual regression tests, pnpm test:live for live host tests, or pnpm test:eval for multi-model evals that test tool calling across GPT-4o, Claude, Gemini, and other LLMs. The inspector fixture from sunpeak/test calls your tools, renders them in simulated ChatGPT and Claude runtimes, and gives you a frame locator for assertions. Tests run against both hosts automatically. No paid accounts. No AI credits. Define states in simulation files and run everything automatically.

This guide covers everything you need to test ChatGPT Apps with confidence.

Why Testing ChatGPT Apps is Different

ChatGPT Apps run in a specialized runtime environment. Your React components don’t just render in a browser. They render inside the ChatGPT App runtime with:

- Host frontend state - Inline, picture-in-picture, or fullscreen display mode, plus light or dark theme, and so on

- Tool invocations - The AI host calls your app’s tools with specific inputs

- Backend state - Various possible states for users and sessions in your database

- App state - Persistent state that survives across invocations

- Multiple hosts - ChatGPT and Claude each have their own UI chrome, color palette, layout conventions, and rendering behavior

Testing each combination manually isn’t feasible; the combinatorics are brutal.

The Cross-Host Problem

MCP Apps run on ChatGPT, Claude, and other hosts. Each host renders your app differently, and your app needs to look right on every one of them.

Testing manually against the real hosts means:

- A ChatGPT Plus subscription ($20/mo per team member)

- A Claude Pro subscription ($20/mo per team member)

- Burning AI credits every time you test, because the model processes your tool call each time you want to see your UI

- Waiting for the model to respond before you can see your component render

- No way to run these tests in CI/CD, since you can’t automate real ChatGPT or Claude interactions

During active development, you might test dozens of times a day. Across a team of five, that’s $200/month in subscriptions alone, plus whatever credits you burn. And you still can’t run automated regression tests.

sunpeak’s inspector ships both a ChatGPT host and a Claude host built-in. Switch between them with the host dropdown in the sidebar, or pass ?host=claude in the URL. Your automated tests run against both hosts on every push, on your CI/CD runners, with zero external dependencies. No paid accounts, no API keys, no credits.

Setting Up Your Testing Environment

If you’re using the sunpeak ChatGPT App framework, testing is pre-configured. Start with:

```bash
npx sunpeak new sunpeak-app
cd sunpeak-app
```

Your project includes:

- E2E tests powered by Playwright with the `inspector` fixture from `sunpeak/test`
- Unit tests powered by Vitest with happy-dom
- Simulation files in `tests/simulations/` for deterministic states
- Eval scaffolding in `tests/evals/` for multi-model tool calling tests

The Playwright config is a one-liner:

```typescript
// playwright.config.ts
import { defineConfig } from 'sunpeak/test/config';

export default defineConfig();
```

This handles dev server startup, port allocation, and multi-host project setup automatically.

Unit Testing with Vitest

Unit tests validate individual components in isolation. Run them with:

```bash
pnpm test:unit
```

Create tests alongside your components in `src/resources` with the `.test.tsx` extension:

```tsx
// src/resources/counter/counter.test.tsx (next to the component)
// Assumes Vitest globals (describe/it/expect) and the
// @testing-library/jest-dom matchers are enabled in your Vitest setup.
import { render, screen } from '@testing-library/react';
import userEvent from '@testing-library/user-event';
import { Counter } from './counter';

describe('Counter', () => {
  it('renders the initial count', () => {
    render(<Counter />);
    expect(screen.getByText('0')).toBeInTheDocument();
  });

  it('increments when button is clicked', async () => {
    const user = userEvent.setup();
    render(<Counter />);
    await user.click(screen.getByRole('button', { name: /increment/i }));
    expect(screen.getByText('1')).toBeInTheDocument();
  });
});
```

Unit tests run fast and catch component-level bugs early. They’re ideal for testing:

- Component rendering logic

- User interactions within a component

- Props and state handling

End-to-End Testing with the inspector Fixture

E2E tests validate your ChatGPT App running in the inspector. Run them with:

```bash
pnpm test:e2e
```

Create tests in `tests/e2e/` with the `.spec.ts` extension:

```typescript
import { test, expect } from 'sunpeak/test';

test('counter increments in fullscreen mode', async ({ inspector }) => {
  const result = await inspector.renderTool('show-counter', undefined, {
    displayMode: 'fullscreen',
    theme: 'dark',
  });
  const app = result.app();

  await app.locator('button:has-text("increment")').click();
  await expect(app.locator('text=1')).toBeVisible();
});
```

The `inspector` fixture handles inspector navigation, double-iframe traversal, and host selection. The `inspector.renderTool()` method accepts:

- First arg - Tool name (matches your tool file in `src/tools/`)
- Second arg - Tool arguments (usually `undefined` or `{}` when using simulation data)
- Third arg - Display options: `theme`, `displayMode`, `prodResources`

result.app() returns a Playwright FrameLocator scoped to your resource component.

Tests automatically run against both ChatGPT and Claude hosts via Playwright projects. You don’t need to loop over hosts manually. When a test fails on Claude but passes on ChatGPT (or vice versa), the test name tells you which host had the issue.

sunpeak also provides MCP-native assertion matchers:

- `toBeError()` - Assert that a tool call returned an error
- `toHaveTextContent()` - Assert text content in the tool result
- `toHaveStructuredContent()` - Assert structured content in the tool result
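To make these matchers concrete: under the MCP specification, a tool call result carries a `content` array, an optional `isError` flag, and optional `structuredContent`. The sketch below is a hypothetical, simplified illustration of the checks such matchers could perform on a plain result object. It is not sunpeak's implementation, and the helper names (`isToolError`, `textOf`, `hasStructuredContent`) are invented for this example.

```typescript
// Simplified shape of an MCP tool call result (only the fields used here).
interface TextBlock {
  type: "text";
  text: string;
}
interface OtherBlock {
  type: "image" | "audio" | "resource" | "resource_link";
}
type ContentBlock = TextBlock | OtherBlock;

interface CallToolResult {
  content: ContentBlock[];
  isError?: boolean;
  structuredContent?: Record<string, unknown>;
}

// Roughly what a toBeError()-style check looks at: the isError flag.
function isToolError(result: CallToolResult): boolean {
  return result.isError === true;
}

// Roughly what a toHaveTextContent()-style check looks at: the text blocks.
function textOf(result: CallToolResult): string {
  return result.content
    .filter((block): block is TextBlock => block.type === "text")
    .map((block) => block.text)
    .join("\n");
}

// Roughly what a toHaveStructuredContent()-style check looks at.
// Shallow comparison only: nested objects would need a deep-equal.
function hasStructuredContent(
  result: CallToolResult,
  expected: Record<string, unknown>,
): boolean {
  const actual = result.structuredContent;
  if (actual === undefined) return false;
  return Object.entries(expected).every(([key, value]) => Object.is(actual[key], value));
}

// Same toolResult as the simulation example in the next section.
const counterResult: CallToolResult = {
  content: [{ type: "text", text: "Counter displayed" }],
  structuredContent: { count: 5 },
};

console.log(isToolError(counterResult)); // false
console.log(textOf(counterResult)); // Counter displayed
console.log(hasStructuredContent(counterResult, { count: 5 })); // true
```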

Creating Simulation Files

Simulation files define deterministic states for testing. Create them in tests/simulations/:

```json
{
  "tool": "show-counter",
  "userMessage": "Show me a counter starting at 5",
  "toolInput": {
    "arguments": { "initialCount": 5 }
  },
  "toolResult": {
    "content": [{ "type": "text", "text": "Counter displayed" }],
    "structuredContent": {
      "count": 5
    }
  }
}
```

This simulation:

- References the `tool` file to mock by name (matches `src/tools/show-counter.ts`)
- Shows `userMessage` in the inspector chat interface
- Sets `toolInput` with mock input accessible via `useToolData()`
- Provides `toolResult` with mock output data passed to your component via `useToolData()`
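If you want malformed simulation files to fail early, one option is to describe their shape in TypeScript and check parsed JSON at runtime. The interface below is inferred only from the example above, so treat it as an assumption rather than sunpeak's published schema. `Simulation`, `isRecord`, and `isSimulation` are hypothetical names, and the check is shallow: it does not inspect each content item.

```typescript
// Shape inferred from the example simulation file; not an official sunpeak schema.
interface Simulation {
  tool: string;
  userMessage?: string;
  toolInput?: { arguments?: Record<string, unknown> };
  toolResult?: {
    content?: Array<{ type: string; text?: string }>;
    structuredContent?: Record<string, unknown>;
  };
}

// True for plain objects (not null, not arrays).
function isRecord(value: unknown): value is Record<string, unknown> {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Hypothetical shallow runtime check for a parsed simulation file.
function isSimulation(value: unknown): value is Simulation {
  if (!isRecord(value) || typeof value.tool !== "string") return false;

  const { userMessage, toolInput, toolResult } = value;
  if (userMessage !== undefined && typeof userMessage !== "string") return false;

  if (toolInput !== undefined) {
    if (!isRecord(toolInput)) return false;
    const args = toolInput.arguments;
    if (args !== undefined && !isRecord(args)) return false;
  }

  if (toolResult !== undefined) {
    if (!isRecord(toolResult)) return false;
    const { content, structuredContent } = toolResult;
    if (content !== undefined && !Array.isArray(content)) return false;
    if (structuredContent !== undefined && !isRecord(structuredContent)) return false;
  }
  return true;
}

const raw = `{
  "tool": "show-counter",
  "userMessage": "Show me a counter starting at 5",
  "toolInput": { "arguments": { "initialCount": 5 } },
  "toolResult": {
    "content": [{ "type": "text", "text": "Counter displayed" }],
    "structuredContent": { "count": 5 }
  }
}`;

const parsed: unknown = JSON.parse(raw);
console.log(isSimulation(parsed)); // true
```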

Use simulations to test specific states without manual setup:

```typescript
import { test, expect } from 'sunpeak/test';

test('counter shows initial value of 5', async ({ inspector }) => {
  const result = await inspector.renderTool('show-counter');
  const app = result.app();
  await expect(app.locator('text=5')).toBeVisible();
});
```

Testing Across Display Modes

ChatGPT Apps appear in three display modes. Test all of them:

```typescript
import { test, expect } from 'sunpeak/test';

const displayModes = ['inline', 'pip', 'fullscreen'] as const;

for (const displayMode of displayModes) {
  test(`renders correctly in ${displayMode} mode`, async ({ inspector }) => {
    const result = await inspector.renderTool('show-counter', undefined, { displayMode });
    const app = result.app();
    await expect(app.locator('button')).toBeVisible();
  });
}
```

Each mode has different constraints:

- Inline - Embedded in chat

- Picture-in-picture - Floating window

- Fullscreen - Maximum space, modal overlay

Your app should adapt gracefully to each.
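One TypeScript pattern that helps here is an exhaustive `switch` over the display modes, so the compiler flags any mode your layout logic forgets. The mode strings match the ones used in the tests above. The `Layout` fields (`maxItems`, `showDetails`) and their values are hypothetical placeholders for whatever your component actually varies per mode.

```typescript
type DisplayMode = "inline" | "pip" | "fullscreen";

// Hypothetical per-mode layout settings for an example component.
interface Layout {
  maxItems: number; // how many list items to render
  showDetails: boolean; // whether to render a detail panel
}

function layoutFor(mode: DisplayMode): Layout {
  switch (mode) {
    case "inline":
      // Embedded in the chat: keep it compact.
      return { maxItems: 3, showDetails: false };
    case "pip":
      // Small floating window: show only the essentials.
      return { maxItems: 1, showDetails: false };
    case "fullscreen":
      // Modal overlay with the most room.
      return { maxItems: 20, showDetails: true };
    default: {
      // If a new mode is added to DisplayMode, this assignment stops compiling.
      const unhandled: never = mode;
      throw new Error(`Unhandled display mode: ${String(unhandled)}`);
    }
  }
}

for (const mode of ["inline", "pip", "fullscreen"] as const) {
  console.log(mode, layoutFor(mode));
}
```

The `never` assignment turns a forgotten mode into a compile-time error instead of a layout bug that only shows up in one host or one display mode.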

Testing Theme Adaptation

Test both light and dark themes:

```typescript
import { test, expect } from 'sunpeak/test';

test('adapts to dark theme', async ({ inspector }) => {
  const result = await inspector.renderTool('show-counter', undefined, { theme: 'dark' });
  const app = result.app();

  // Verify dark theme styles are applied. The expected color is this
  // example app's dark-theme button color; substitute your own design value.
  const button = app.locator('button');
  await expect(button).toHaveCSS('background-color', 'rgb(255, 184, 0)');
});
```

Testing Across Hosts

sunpeak’s testing framework runs each test against both ChatGPT and Claude hosts automatically. The defineConfig() from sunpeak/test/config sets up Playwright projects for each host.

You don’t need to loop over hosts in your test code. Write your test once:

```typescript
import { test, expect } from 'sunpeak/test';

test('counter renders correctly', async ({ inspector }) => {
  const result = await inspector.renderTool('show-counter', undefined, {
    displayMode: 'fullscreen',
    theme: 'dark',
  });
  const app = result.app();
  await expect(app.locator('button:has-text("increment")')).toBeVisible();
});
```

This test runs twice, once against ChatGPT and once against Claude. If it fails on one host but passes on the other, the test report shows which host had the problem.

For full coverage across themes and display modes:

```typescript
import { test, expect } from 'sunpeak/test';

const themes = ['light', 'dark'] as const;
const displayModes = ['inline', 'pip', 'fullscreen'] as const;

for (const theme of themes) {
  for (const displayMode of displayModes) {
    test(`renders in ${theme} / ${displayMode}`, async ({ inspector }) => {
      const result = await inspector.renderTool('show-counter', undefined, { theme, displayMode });
      const app = result.app();
      await expect(app.locator('button')).toBeVisible();
    });
  }
}
```

That’s 12 test cases (2 hosts × 2 themes × 3 display modes) from a few lines of code. Each runs against the local inspector in seconds, with no network requests, no paid accounts, and no AI credits.
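The count of 12 is the size of a cartesian product: the two host projects that `defineConfig()` sets up, times the two themes and three display modes in the loops. The standalone sketch below enumerates that product so the arithmetic is visible. It does not touch Playwright or sunpeak, and the host strings are plain labels.

```typescript
const hosts = ["chatgpt", "claude"] as const;
const themes = ["light", "dark"] as const;
const displayModes = ["inline", "pip", "fullscreen"] as const;

interface Combo {
  host: (typeof hosts)[number];
  theme: (typeof themes)[number];
  displayMode: (typeof displayModes)[number];
}

// Cartesian product: every host x theme x display mode combination.
function testMatrix(): Combo[] {
  const combos: Combo[] = [];
  for (const host of hosts) {
    for (const theme of themes) {
      for (const displayMode of displayModes) {
        combos.push({ host, theme, displayMode });
      }
    }
  }
  return combos;
}

const matrix = testMatrix();
console.log(matrix.length); // 12
```

Adding a third host or another theme multiplies the count again, which is why looping in code scales where manual testing does not.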

These same tests run on your CI/CD runners. A GitHub Actions workflow doesn’t need ChatGPT Plus credentials or Claude API keys. The inspector is self-contained.

Visual Regression Testing

Visual regression tests catch unintended UI changes by comparing screenshots against baseline images. Run them with:

```bash
pnpm test:visual
```

This runs your e2e tests and adds screenshot comparison on top. The first run generates baseline screenshots. Subsequent runs compare against those baselines and fail if any pixels differ beyond the threshold.
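Conceptually, the comparison counts how many pixels differ between the baseline and the new screenshot, and fails when that share exceeds a tolerance. The sketch below is a deliberately simplified version over raw RGBA byte arrays. Playwright’s built-in comparator is more sophisticated (its `threshold` option is a perceptual color-distance tolerance, and `maxDiffPixels` / `maxDiffPixelRatio` cap how many pixels may differ). The `channelTolerance` and `maxDiffRatio` parameters here are illustrative, not sunpeak’s settings.

```typescript
// Fraction of pixels that differ between two same-sized RGBA images
// (4 bytes per pixel). A pixel counts as different if any channel moves
// by more than channelTolerance.
function diffRatio(baseline: Uint8Array, actual: Uint8Array, channelTolerance = 0): number {
  if (baseline.length !== actual.length || baseline.length % 4 !== 0) {
    throw new Error("Expected two RGBA buffers of the same size");
  }
  const pixelCount = baseline.length / 4;
  if (pixelCount === 0) return 0;
  let differing = 0;
  for (let i = 0; i < baseline.length; i += 4) {
    for (let c = 0; c < 4; c++) {
      if (Math.abs(baseline[i + c] - actual[i + c]) > channelTolerance) {
        differing++;
        break; // one differing channel is enough for this pixel
      }
    }
  }
  return differing / pixelCount;
}

// Pass when the share of differing pixels stays within maxDiffRatio.
function matchesBaseline(
  baseline: Uint8Array,
  actual: Uint8Array,
  maxDiffRatio = 0,
  channelTolerance = 0,
): boolean {
  return diffRatio(baseline, actual, channelTolerance) <= maxDiffRatio;
}

const base = new Uint8Array(16); // 2x2 image, all channels 0
const changed = Uint8Array.from(base);
changed[0] = 255; // change the red channel of the first pixel

console.log(diffRatio(base, changed)); // 0.25
console.log(matchesBaseline(base, changed, 0.3)); // true
console.log(matchesBaseline(base, changed)); // false
```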

Visual tests are useful for catching:

- CSS changes that break layout across hosts

- Theme rendering differences between ChatGPT and Claude

- Display mode transitions that shift elements unexpectedly

Because pnpm test:visual includes e2e tests, you can combine it with unit tests by running both commands. For example, pnpm test:unit && pnpm test:visual runs unit tests, e2e tests, and visual regression tests.

To update baselines after intentional UI changes, delete the old screenshots in test-results/ and re-run pnpm test:visual to generate new ones.

Multi-Model Evals

All the tests above validate UI rendering. But what about tool calling? A tool description that GPT-4o interprets well might confuse Gemini. Evals test whether different LLMs call your tools correctly.

Evals connect to your MCP server, discover its tools over MCP, send prompts to multiple models (GPT-4o, Claude, Gemini, etc.), and assert that each model calls the right tools with the right arguments. Each eval case runs multiple times per model to measure reliability, since LLM responses are non-deterministic.

Run them with:

```bash
pnpm test:eval
```

Evals are not included in the default pnpm test run because they cost money (API credits). You opt in explicitly.
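To make the assertion step concrete, here is a hypothetical sketch of grading one model response. It checks the tool name first, then each expected argument, treating a `RegExp` as a pattern for string arguments (loosely analogous to the `expect.stringMatching` matcher used in the eval spec below) and anything else as an exact value. `ToolCall`, `Expectation`, and `gradeCall` are invented for illustration; sunpeak’s eval runner may work differently.

```typescript
interface ToolCall {
  tool: string;
  args: Record<string, unknown>;
}

interface Expectation {
  tool: string;
  // Exact values (compared with Object.is), or RegExp patterns for strings.
  args?: Record<string, unknown>;
}

type Grade = { pass: true } | { pass: false; reason: string };

// Grade one model response: right tool first, then each expected argument.
function gradeCall(expected: Expectation, actual: ToolCall | undefined): Grade {
  if (actual === undefined) {
    return { pass: false, reason: "no tool was called" };
  }
  if (actual.tool !== expected.tool) {
    return { pass: false, reason: `called '${actual.tool}' instead of '${expected.tool}'` };
  }
  for (const [key, want] of Object.entries(expected.args ?? {})) {
    const got = actual.args[key];
    // Avoid the /g flag on patterns: RegExp.test() is stateful with it.
    const ok =
      want instanceof RegExp ? typeof got === "string" && want.test(got) : Object.is(got, want);
    if (!ok) {
      return { pass: false, reason: `argument '${key}' did not match` };
    }
  }
  return { pass: true };
}

const expectation: Expectation = {
  tool: "show-albums",
  args: { search: /pizza|austin/i },
};

const hit = gradeCall(expectation, { tool: "show-albums", args: { search: "Austin pizza tour" } });
const miss = gradeCall(expectation, { tool: "get-photos", args: {} });

console.log(hit.pass); // true
if (!miss.pass) console.log(miss.reason); // called 'get-photos' instead of 'show-albums'
```

Running a grader like this N times per model and counting passes is what turns non-deterministic responses into a reliability percentage.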

Writing an Eval

Create eval specs in tests/evals/*.eval.ts:

```typescript
import { expect } from 'vitest';
import { defineEval } from 'sunpeak/eval';

export default defineEval({
  cases: [
    {
      name: 'asks for photo albums',
      prompt: 'Show me my photo albums',
      expect: { tool: 'show-albums' },
    },
    {
      name: 'asks for food photos',
      prompt: 'Show me photos from my Austin pizza tour',
      expect: {
        tool: 'show-albums',
        args: { search: expect.stringMatching(/pizza|austin/i) },
      },
    },
  ],
});
```

Configure which models to test in `tests/evals/eval.config.ts`:

```typescript
import { defineEvalConfig } from 'sunpeak/eval';

export default defineEvalConfig({
  models: ['gpt-4o', 'claude-sonnet-4-20250514', 'gemini-2.0-flash'],
  defaults: {
    runs: 10,
    temperature: 0,
  },
});
```

Eval Output

Each case runs N times per model. The reporter shows pass/fail counts:

```text
tests/evals/albums.eval.ts
  asks for photo albums
    gpt-4o          10/10 passed (100%)  avg 1.2s
    claude-sonnet    9/10 passed (90%)   avg 0.8s
    gemini-flash     6/10 passed (60%)   avg 0.9s
      └ failures: called 'get-photos' instead of 'show-albums' (4x)

Summary: 25/30 passed (83%) across 3 models
```

Evals are scaffolded automatically by `npx sunpeak new` and `npx sunpeak test init`. API keys go in `tests/evals/.env` (gitignored). See the evals documentation for the full eval reference.
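The summary line is plain arithmetic over the run results: each model’s passes divided by its runs, plus the overall total across models (10 + 9 + 6 = 25 passes out of 3 × 10 = 30 runs, about 83%). Here is a minimal sketch of that aggregation. It assumes a hypothetical `RunResult` record per run and is not sunpeak’s reporter code.

```typescript
interface RunResult {
  model: string;
  passed: boolean;
}

interface ModelSummary {
  model: string;
  passed: number;
  total: number;
  percent: number;
}

// Rounded pass percentage; 0 when there were no runs.
function percent(passed: number, total: number): number {
  return total === 0 ? 0 : Math.round((passed / total) * 100);
}

// Group runs by model, then total them across models.
function summarize(runs: RunResult[]): { perModel: ModelSummary[]; overall: ModelSummary } {
  const byModel = new Map<string, { passed: number; total: number }>();
  for (const run of runs) {
    const entry = byModel.get(run.model) ?? { passed: 0, total: 0 };
    entry.total += 1;
    if (run.passed) entry.passed += 1;
    byModel.set(run.model, entry);
  }
  const perModel = Array.from(byModel, ([model, { passed, total }]) => ({
    model,
    passed,
    total,
    percent: percent(passed, total),
  }));
  const allPassed = perModel.reduce((sum, m) => sum + m.passed, 0);
  const allRuns = perModel.reduce((sum, m) => sum + m.total, 0);
  return {
    perModel,
    overall: { model: "all", passed: allPassed, total: allRuns, percent: percent(allPassed, allRuns) },
  };
}

// n runs of one model where the first `passes` succeed (illustration only).
function runsFor(model: string, passes: number, n: number): RunResult[] {
  return Array.from({ length: n }, (_, i) => ({ model, passed: i < passes }));
}

const { perModel, overall } = summarize([
  ...runsFor("gpt-4o", 10, 10),
  ...runsFor("claude-sonnet", 9, 10),
  ...runsFor("gemini-flash", 6, 10),
]);

for (const m of perModel) {
  console.log(`${m.model} ${m.passed}/${m.total} passed (${m.percent}%)`);
}
console.log(`Summary: ${overall.passed}/${overall.total} passed (${overall.percent}%)`);
// Summary: 25/30 passed (83%)
```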

Running Tests in CI/CD

Add testing to your GitHub Actions workflow:

```yaml
name: Test

on: [push, pull_request]

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v5
      - uses: pnpm/action-setup@v4
        with:
          version: 10
      - uses: actions/setup-node@v4
        with:
          node-version: '20'
          cache: 'pnpm'
      - run: pnpm install
      - run: pnpm exec playwright install chromium --with-deps
      - run: pnpm test
```

`pnpm test` runs both unit and e2e tests. The testing framework automatically:

- Runs unit tests with Vitest

- Starts the sunpeak dev server

- Runs e2e tests against both the ChatGPT and Claude hosts in the inspector

- Shuts down when complete

No API keys, paid subscriptions, or AI credits are needed on your CI runners. The inspector is entirely self-contained. Your team gets automated cross-host regression testing on every push without any external dependencies.

To add visual regression tests to your CI pipeline, use pnpm test:visual instead. This runs e2e tests and compares screenshots against baseline images, catching unintended UI changes across hosts, themes, and display modes.

Debugging Failing Tests

When tests fail, use these debugging techniques:

Playwright Debug Mode

```bash
pnpm test:e2e -- --ui
```

Opens a visual debugger where you can:

- Step through tests

- Inspect the DOM at each step

- See screenshots and traces

Vitest Verbose Output

```bash
pnpm test:unit --reporter=verbose
```

Shows detailed output including:

- Individual assertion results

- Component render output

- Error stack traces

Screenshot on Failure

Playwright automatically captures screenshots on failure. Find them in test-results/.

Testing Best Practices

One assertion per test. Keep tests focused and easy to debug:

```typescript
import { test, expect } from 'sunpeak/test';

// Good: focused test
test('increment button is visible', async ({ inspector }) => {
  const result = await inspector.renderTool('show-counter');
  const app = result.app();
  await expect(app.locator('button:has-text("increment")')).toBeVisible();
});

// Avoid: multiple unrelated assertions
test('counter works', async ({ inspector }) => {
  // Too many things being tested at once
});
```

Test behavior, not implementation. Focus on what users see:

```typescript
// Good: tests user-visible behavior
await expect(app.locator('text=5')).toBeVisible();

// Avoid: tests implementation details
expect(component.state.count).toBe(5);
```

Use descriptive test names. Make failures self-explanatory:

```typescript
// Good: clear failure message
test('displays error message when API call fails', ...)

// Avoid: vague description
test('handles error', ...)
```

Clean up between tests. Reset state to avoid test pollution:

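```typescript
// In Vitest unit tests:
import { afterEach, vi } from 'vitest';
import { cleanup } from '@testing-library/react';

afterEach(() => {
  cleanup(); // unmount anything rendered by the previous test
  vi.restoreAllMocks(); // undo vi.spyOn() spies
  // Reset any other global state your app uses
});
```

Get Started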

```bash
npx sunpeak new
```


Frequently Asked Questions

How do I test a ChatGPT App locally without a paid ChatGPT account?

Use sunpeak, the ChatGPT App framework. Run "pnpm dev" to start a local inspector at localhost:3000 that ships both a ChatGPT host and a Claude host. You can test all display modes, themes, tool invocations, and host-specific rendering without any paid subscription or burning AI credits.

What testing frameworks work with ChatGPT Apps?

sunpeak includes a built-in testing framework. Run "pnpm test" to execute both unit and e2e tests. Use pnpm test:unit or pnpm test:e2e to run them separately. E2E tests use the inspector fixture from sunpeak/test, which calls tools, renders them in simulated hosts, and gives you a frame locator for assertions. Add pnpm test:visual for visual regression tests. Unit tests use Vitest under the hood.

How do I run ChatGPT App tests in CI/CD pipelines?

sunpeak projects include testing infrastructure ready for CI/CD. Add "pnpm test" to your pipeline to run both unit and e2e tests. Tests automatically start the dev server, run against both the ChatGPT and Claude hosts in the inspector, and shut down when complete. No paid accounts, API keys, or AI credits needed on your CI runners.

What are simulation files in ChatGPT App testing?

Simulation files are JSON files in tests/simulations/ that define deterministic UI states for testing. They specify a tool name (referencing a tool file), toolInput (mock input), toolResult (mock output), and a userMessage. The sunpeak framework auto-discovers any *.json file in the simulations directory.

Can I test different ChatGPT App display modes with sunpeak?

Yes. The inspector fixture from sunpeak/test accepts display mode options. Call inspector.renderTool("tool-name", undefined, { displayMode: "fullscreen" }) to test fullscreen, pip, or inline modes. Tests automatically run against both ChatGPT and Claude hosts.

How do I test my MCP App on both ChatGPT and Claude without paid accounts?

pnpm test runs your unit tests and runs your e2e tests against both the ChatGPT and Claude hosts automatically via Playwright projects. You do not need to loop over hosts manually. Each e2e test runs once per host, and failures tell you which host had the issue. All tests run locally and in CI with zero external dependencies.

How much does it cost to test a ChatGPT App against the real ChatGPT?

Manual testing against real ChatGPT requires a ChatGPT Plus subscription ($20/month per team member) and burns AI credits on every test. During active development, you might test dozens of times a day. sunpeak eliminates this cost entirely. The local inspector replicates the ChatGPT and Claude runtimes, so you test for free, locally and in CI/CD.

What is the difference between unit tests and e2e tests for ChatGPT Apps?

Unit tests (pnpm test:unit, powered by Vitest) test individual React components in isolation using happy-dom. E2E tests (pnpm test:e2e, powered by Playwright) test the full ChatGPT App running in the sunpeak inspector, including user interactions, tool calls, and display mode transitions. Visual regression tests (pnpm test:visual) add screenshot comparison on top of e2e tests. Running pnpm test without flags runs both unit and e2e tests.

How do I debug failing ChatGPT App tests?

Run "pnpm test:e2e -- --ui" to open Playwright in debug mode with a visual interface. You can step through tests, inspect the DOM, and see screenshots at each step. For unit tests, use "pnpm test:unit --reporter=verbose" for detailed output.