How to Debug ChatGPT Apps: Troubleshooting Guide for MCP App Development (April 2026)

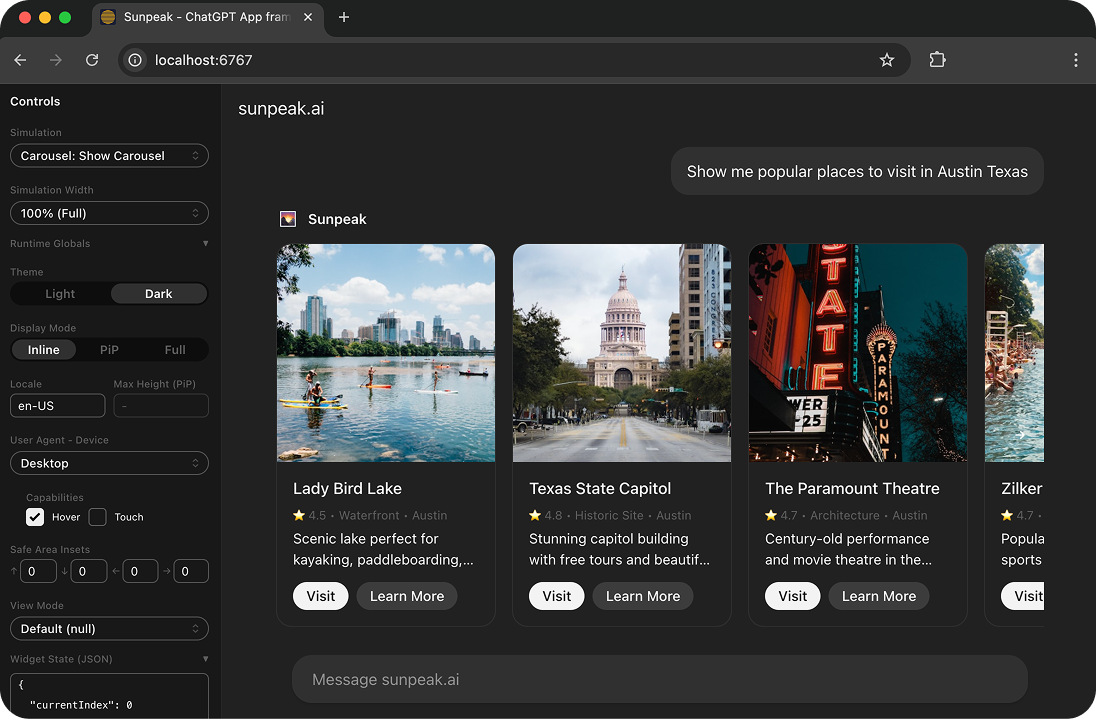

Debug ChatGPT Apps locally with the sunpeak inspector and browser DevTools.

Debugging a ChatGPT App is frustrating without the right tools. Your app works on your laptop, then breaks in ChatGPT with no stack trace. A display mode that looked fine yesterday renders off-screen today. The useToolData() output matches your logs but the UI still shows a blank card. Most of these problems come from the same handful of root causes, and once you know what to look for, they take minutes to fix instead of hours.

TL;DR: Debug in the sunpeak inspector with browser DevTools instead of in ChatGPT. Use the inspector sidebar to edit live tool values, simulation files to lock in reproducible states, and the mcp fixture from sunpeak/test to test tool output shape at the protocol level. When things break in real ChatGPT, check the resource cache and the iframe console before blaming your code.

This guide covers the bugs you will hit building ChatGPT Apps (which are MCP Apps rendered in ChatGPT) and how to fix each one.

Set Up a Local Debug Environment

The fastest way to debug a ChatGPT App is to not use ChatGPT at all. The sunpeak inspector replicates the ChatGPT runtime locally, so you get hot reload, DevTools, and deterministic tool states without redeploying on every change.

npx sunpeak new sunpeak-app

cd sunpeak-app && pnpm dev

You now have:

- Inspector at localhost:3000 with ChatGPT and Claude runtimes selectable from a dropdown

- MCP dev server at localhost:8000 with request logging

- HMR for instant feedback on code changes

- Simulation files under tests/simulations/ for reproducible tool states

Open DevTools (F12 or Cmd+Option+I) alongside the inspector. Between the terminal, the inspector sidebar, and DevTools, you can see every value your app reads or returns.

If you already have an MCP server and want to debug it without converting to a sunpeak project, run npx sunpeak inspect --server http://localhost:8000/mcp to point the inspector at it.

Debug Tool Input and Tool Output

The useToolData hook returns the tool input (arguments the host passed) and the tool output (structuredContent from your MCP server). Most “blank card” and “wrong data” bugs trace back to something going wrong in one of these two values.

Inspect Live Values in the Sidebar

The inspector sidebar shows current toolInput, tool output, displayMode, and theme values. Edit them in place to reproduce edge cases without touching code.

If a production user reported their dashboard showed undefined, paste their tool output shape into the sidebar and watch what renders. If it breaks, you have a repro in under a minute.

Lock In a State With a Simulation File

For reproducible cases and automated tests, create a simulation file:

{

"tool": "show-dashboard",

"toolInput": {

"arguments": { "quarter": "Q1", "year": 2026 }

},

"toolResult": {

"content": [{ "type": "text", "text": "Dashboard loaded" }],

"structuredContent": {

"revenue": 125000,

"orders": 847,

"topProducts": [

{ "name": "Wireless Headphones", "unitsSold": 120, "revenue": 9599 }

]

}

}

}

Now every time you open this simulation in the inspector, useToolData() returns the same values. Drop one in for each edge case: loading, empty data, partial data, error, huge data set.
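One way to cover those edge cases without hand-copying JSON is to derive variants from a base simulation. The sketch below is illustrative only: the Simulation type mirrors just the fields shown in the example file, and makeVariant and the variant names are hypothetical helpers, not part of sunpeak.

```typescript
// Shape inferred from the example simulation; the real format may allow more fields.
interface Simulation {
  tool: string;
  toolInput: { arguments: Record<string, unknown> };
  toolResult: {
    content: { type: string; text: string }[];
    structuredContent: Record<string, unknown>;
  };
}

const base: Simulation = {
  tool: "show-dashboard",
  toolInput: { arguments: { quarter: "Q1", year: 2026 } },
  toolResult: {
    content: [{ type: "text", text: "Dashboard loaded" }],
    structuredContent: {
      revenue: 125000,
      orders: 847,
      topProducts: [{ name: "Wireless Headphones", unitsSold: 120, revenue: 9599 }],
    },
  },
};

// Hypothetical helper: copy the base and replace only structuredContent.
function makeVariant(
  sim: Simulation,
  structuredContent: Record<string, unknown>,
): Simulation {
  return { ...sim, toolResult: { ...sim.toolResult, structuredContent } };
}

// Two edge cases: everything empty, and a payload with fields missing.
const emptyData = makeVariant(base, { revenue: 0, orders: 0, topProducts: [] });
const partialData = makeVariant(base, { revenue: 125000 });

// Print each variant as JSON, ready to save as its own simulation file.
console.log(JSON.stringify(emptyData, null, 2));
console.log(JSON.stringify(partialData, null, 2));
```

Because each variant is a copy, the base simulation stays untouched and can keep serving as the happy-path case.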

Test the Output Shape at the Protocol Level

The hardest “blank card” bugs happen when the tool handler returns a different shape than the resource component expects. The component reads output.items while the handler returns output.results. Nothing crashes; the card just shows nothing.

Integration tests with the mcp fixture catch these before they ship. See the integration testing guide for the full pattern:

import { test, expect } from 'sunpeak/test';

test('show-dashboard returns the shape the component reads', async ({ mcp }) => {

const result = await mcp.callTool('show-dashboard', {

quarter: 'Q1',

year: 2026,

});

expect(result.isError).toBeFalsy();

const data = result.structuredContent;

// Exact fields the DashboardResource component reads via useToolData().output

expect(data).toHaveProperty('revenue');

expect(data).toHaveProperty('orders');

expect(data).toHaveProperty('topProducts');

expect(Array.isArray(data.topProducts)).toBe(true);

});

This test runs in seconds, works in CI, and fails loudly when someone renames a field on one side but not the other.
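A runtime check can complement the integration test by reporting the same kind of mismatch in the browser console while you debug. The following is a minimal sketch, assuming the dashboard shape used in the examples in this guide; DashboardOutput, findShapeProblems, and isDashboardOutput are hypothetical names, and the check covers only top-level fields.

```typescript
// Hypothetical shape; mirrors the fields asserted in the example test.
interface DashboardOutput {
  revenue: number;
  orders: number;
  topProducts: { name: string; unitsSold: number; revenue: number }[];
}

// Returns a list of problems rather than a boolean, so a log line says what is wrong.
function findShapeProblems(value: unknown): string[] {
  if (typeof value !== "object" || value === null) {
    return ["output is not an object"];
  }
  const record = value as Record<string, unknown>;
  const problems: string[] = [];
  if (typeof record["revenue"] !== "number") problems.push("revenue should be a number");
  if (typeof record["orders"] !== "number") problems.push("orders should be a number");
  if (!Array.isArray(record["topProducts"])) problems.push("topProducts should be an array");
  return problems;
}

// Type guard built on the same checks (top-level fields only).
function isDashboardOutput(value: unknown): value is DashboardOutput {
  return findShapeProblems(value).length === 0;
}

// A handler that renamed topProducts to results and dropped orders:
console.log(findShapeProblems({ revenue: 125000, results: [] }));
// → ["orders should be a number", "topProducts should be an array"]
```

Logging the problem list at render time turns a silent blank card into a console message that names the missing field.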

Debug Display Mode Issues

ChatGPT Apps render in three display modes, each with different constraints:

- inline is embedded in the chat flow with a narrow fixed height.

- pip is a floating window that users can resize or dock.

- fullscreen is a modal overlay covering the viewport.

Code that looks fine in fullscreen often breaks in inline, because the available height shrinks by 10x. Check the display mode reference for the full set of constraints.

Use useDisplayMode to branch on the current mode:

import { useDisplayMode } from 'sunpeak';

export function Dashboard() {

const displayMode = useDisplayMode();

return (

<div className={displayMode === 'fullscreen' ? 'p-8' : 'p-2'}>

{/* Adapt layout to mode */}

</div>

);

}

Test all modes systematically with the inspector fixture:

import { test, expect } from 'sunpeak/test';

const modes = ['inline', 'pip', 'fullscreen'] as const;

for (const displayMode of modes) {

test(`dashboard renders in ${displayMode}`, async ({ inspector }) => {

const result = await inspector.renderTool('show-dashboard', undefined, { displayMode });

const app = result.app();

await expect(app.locator('h1')).toBeVisible();

await expect(app).toHaveScreenshot(`dashboard-${displayMode}.png`);

});

}

Screenshots catch layout drift that visibility checks miss. Run pnpm test:visual to diff against the baseline.
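When a component branches on display mode in several places, it can help to keep the per-mode decisions in one table. This is a sketch under stated assumptions: the DisplayMode union matches the three modes named in this section, while LayoutConfig, layoutFor, visibleRows, and every value in the table are hypothetical and should be tuned against the real constraints.

```typescript
type DisplayMode = "inline" | "pip" | "fullscreen";

interface LayoutConfig {
  padding: string; // class name applied to the root element
  maxRows: number; // how many table rows to render
  showChart: boolean;
}

// Illustrative values only. Record<DisplayMode, ...> makes the compiler
// flag a missing entry if a new mode is added to the union.
const layouts: Record<DisplayMode, LayoutConfig> = {
  inline: { padding: "p-2", maxRows: 3, showChart: false },
  pip: { padding: "p-2", maxRows: 5, showChart: false },
  fullscreen: { padding: "p-8", maxRows: 20, showChart: true },
};

function layoutFor(mode: DisplayMode): LayoutConfig {
  return layouts[mode];
}

// Trim a data set to what the current mode has room for.
function visibleRows<T>(rows: T[], mode: DisplayMode): T[] {
  return rows.slice(0, layoutFor(mode).maxRows);
}

const products = Array.from({ length: 10 }, (_, i) => `Product ${i + 1}`);
console.log(visibleRows(products, "inline").length); // → 3
console.log(visibleRows(products, "fullscreen").length); // → 10
```

Keeping the table separate from the component also makes it testable without rendering anything.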

Common Errors and Fixes

Blank Screen

Symptom: Your app renders nothing. No error visible in the card.

Debug steps:

- Open DevTools Console. A JavaScript error during render almost always produces a stack trace. If the console is empty, check the Network tab for a failed bundle fetch.

- Check if useToolData() output is undefined. The hook returns undefined on the first render before data loads, so any code that reads output.something without a guard will throw.

- Wrap your resource in an error boundary so a partial failure does not blank the whole view. See MCP App error handling for the full pattern.

import { useToolData } from 'sunpeak';

export function Dashboard() {

const { output } = useToolData();

if (!output) {

return <div className="p-4">Loading...</div>;

}

return <div>{output.title}</div>;

}

MCP Server Connection Failed

Symptom: The app will not load. The Network tab shows failed requests to /mcp.

Debug steps:

- Confirm pnpm dev is running with no terminal errors.

- If you are connecting from real ChatGPT, verify your tunnel is active and the URL in ChatGPT Settings > Connectors includes the /mcp suffix. A trailing slash or missing path silently breaks the connection.

- Look for CORS errors in DevTools. The MCP server has CORS configured out of the box, but a reverse proxy or custom middleware can break it.
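Because a wrong path fails silently, a quick check of the connector URL before pasting it into ChatGPT can save a debugging round trip. The helper below is a hypothetical sketch, not a sunpeak utility; it only verifies that the URL parses and that its path is exactly /mcp.

```typescript
// Hypothetical helper: returns a list of problems with a connector URL.
function checkConnectorUrl(raw: string): string[] {
  let url: URL;
  try {
    url = new URL(raw);
  } catch {
    return ["not a valid URL"];
  }
  const problems: string[] = [];
  // A missing path parses as "/", and a trailing slash parses as "/mcp/".
  if (url.pathname !== "/mcp") {
    problems.push(`path is "${url.pathname}", expected "/mcp"`);
  }
  return problems;
}

console.log(checkConnectorUrl("https://abc123.ngrok.io/mcp")); // → []
console.log(checkConnectorUrl("https://abc123.ngrok.io/mcp/")); // → ['path is "/mcp/", expected "/mcp"']
console.log(checkConnectorUrl("https://abc123.ngrok.io")); // → ['path is "/", expected "/mcp"']
```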

# Terminal 1

pnpm dev

# Terminal 2 (if you need to test in real ChatGPT)

ngrok http 8000

# In ChatGPT Settings > Connectors, use the full URL with /mcp:

# https://abc123.ngrok.io/mcp

ChatGPT Shows an Old Version of Your App

Symptom: You deployed a fix, but ChatGPT keeps rendering the old UI or calling the old tool definitions.

Cause: ChatGPT caches both resource bundles (by Resource URI) and tool definitions (by Connector modal state). If the URI does not change, ChatGPT serves the cached bundle.

Fix:

- Append a timestamp or content hash to every Resource URI on every build. sunpeak does this automatically in pnpm build. If you are hand-rolling your MCP server, add the cache-busting yourself.

- Open ChatGPT Settings > Apps, find your app, and click Refresh to re-fetch tool definitions.

- Start a new chat before testing. Existing chats freeze their tool schemas at the time the app was first used, so changes to tool names or arguments will not apply retroactively.

- If the UI still does not update, remove and re-add the connector.

This is the single most common reason developers think their code is broken when it actually shipped correctly. See What I Wish I Knew About Building ChatGPT Apps for more caching gotchas.
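For a hand-rolled server, cache-busting can be as small as deriving a version string from the bundle contents. The sketch below rests on several assumptions: fnv1a is a compact stand-in for a real content hash (a build script would more likely use a cryptographic hash), versionedUri and the example URI are hypothetical, and embedding the version as a query parameter is just one option alongside a path segment or file name.

```typescript
// FNV-1a over UTF-16 code units: a small, dependency-free stand-in for a content hash.
function fnv1a(text: string): string {
  let hash = 0x811c9dc5; // 32-bit offset basis
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0; // multiply by the FNV prime, keep unsigned
  }
  return hash.toString(16).padStart(8, "0");
}

// Hypothetical helper: the URI changes whenever the bundle contents change.
function versionedUri(baseUri: string, bundleContents: string): string {
  const separator = baseUri.includes("?") ? "&" : "?";
  return `${baseUri}${separator}v=${fnv1a(bundleContents)}`;
}

// Placeholder URI and bundle strings for illustration.
const before = versionedUri("ui://dashboard/index.html", "<div>old build</div>");
const after = versionedUri("ui://dashboard/index.html", "<div>new build</div>");
console.log(before !== after); // → true
```

A content hash has one advantage over a timestamp: rebuilding without changes yields the same URI, so the host cache is invalidated only when the bundle actually differs.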

Theme Looks Wrong

Symptom: Text is unreadable in dark mode, or the card uses a color that clashes with the host.

Fix: Use useTheme and lean on the host-provided CSS variables instead of hardcoded colors. The host updates these variables when the user toggles themes.

import { useTheme } from 'sunpeak';

export function Dashboard() {

const theme = useTheme();

return (

<div

style={{

background: 'var(--chatgpt-bg-primary, white)',

color: 'var(--chatgpt-text-primary, black)',

}}

data-theme={theme}

>

{/* Theme-aware content */}

</div>

);

}

Test both themes and pair them with visual regression checks:

import { test, expect } from 'sunpeak/test';

for (const theme of ['light', 'dark'] as const) {

test(`dashboard renders in ${theme} theme`, async ({ inspector }) => {

const result = await inspector.renderTool('show-dashboard', undefined, { theme });

const app = result.app();

await expect(app).toHaveScreenshot(`dashboard-${theme}.png`);

});

}

Tool Definition Mismatch

Symptom: ChatGPT calls your tool with arguments that do not match your Zod schema, or fails to call it at all.

Debug steps:

- Run mcp.listTools() in an integration test to see the exact schema your server registers. If a required field is listed as optional (or vice versa), your Zod definition is out of sync with the MCP registration.

- Check your tool description. If it is vague, the host model may not match user queries to your tool. Write descriptions that say exactly what the tool does and what it returns.

- Verify annotations. readOnlyHint and destructiveHint affect how hosts surface the tool and whether it can be auto-approved.
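The required-versus-optional check from the first step can be written as a small comparison that reports drift in both directions. This is a sketch, assuming the registered tool exposes a JSON-Schema-style inputSchema with a required array; RegisteredTool and diffRequired are hypothetical names, and the tool object here is hard-coded rather than fetched from a server.

```typescript
// Minimal view of a registered tool; real tool objects carry more fields.
interface RegisteredTool {
  name: string;
  inputSchema: { required?: string[] };
}

// Hypothetical helper: compare the fields you expect to be required
// with the ones the server actually registered.
function diffRequired(expectedRequired: string[], tool: RegisteredTool) {
  const registered = new Set(tool.inputSchema.required ?? []);
  const expected = new Set(expectedRequired);
  return {
    // Required in your schema, optional (or absent) on the server.
    missingFromServer: expectedRequired.filter((field) => !registered.has(field)),
    // Required on the server, but not in your schema.
    unexpectedOnServer: Array.from(registered).filter((field) => !expected.has(field)),
  };
}

const registeredTool: RegisteredTool = {
  name: "show-dashboard",
  inputSchema: { required: ["quarter"] },
};

console.log(diffRequired(["quarter", "year"], registeredTool));
// → { missingFromServer: ["year"], unexpectedOnServer: [] }
```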

import { test, expect } from 'sunpeak/test';

test('show-dashboard registered with correct schema', async ({ mcp }) => {

const tools = await mcp.listTools();

const tool = tools.find(t => t.name === 'show-dashboard');

expect(tool).toBeTruthy();

expect(tool.annotations?.readOnlyHint).toBe(true);

expect(tool.inputSchema.required).toContain('quarter');

});

Read the MCP Server Logs

The dev server logs every tool call and error. Watching the terminal while you reproduce a bug often points you at the root cause faster than reading code.

# A successful call

[MCP] tools/call: show-dashboard { quarter: "Q1", year: 2026 }

[MCP] response: 200 OK in 42ms

# A handler error

[MCP] tools/call: show-dashboard { quarter: "Q1", year: 2026 }

[MCP] error: Cannot read property 'map' of undefined

at show-dashboard-handler.ts:23:14

For production, pipe logs to a structured logger (JSON lines) so you can grep for specific tool names and error signatures.
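A JSON-lines log entry is one JSON object per line, which keeps each tool call greppable. The formatter below is a minimal sketch with hypothetical field names (ts, level, tool, durationMs, error); a production setup would more likely rely on an established logging library.

```typescript
interface ToolCallEvent {
  tool: string;
  durationMs: number;
  error?: string;
}

// Serialize one event as a single line of JSON.
// The timestamp is injectable so the output is reproducible in tests.
function toLogLine(event: ToolCallEvent, now: Date = new Date()): string {
  return JSON.stringify({
    ts: now.toISOString(),
    level: event.error ? "error" : "info",
    tool: event.tool,
    durationMs: event.durationMs,
    // Include the error field only when there is one.
    ...(event.error ? { error: event.error } : {}),
  });
}

console.log(toLogLine({ tool: "show-dashboard", durationMs: 42 }));
console.log(
  toLogLine({
    tool: "show-dashboard",
    durationMs: 7,
    error: "Cannot read property 'map' of undefined",
  }),
);
```

With this layout, filtering for a tool name or for level "error" is a plain text search over the log file.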

Debug Inside Real ChatGPT

Some bugs only happen in real ChatGPT, usually because the host runtime supplies values or capabilities the inspector cannot perfectly replicate. When you have to debug in ChatGPT itself:

- Open DevTools. In the Console dropdown, switch from top to the iframe labeled root. That is where your app runs. If you have called the app multiple times in one conversation, each call has its own iframe, so pick the newest one.

- Add strategic logging. The inspector sidebar is not available in ChatGPT, so console logs are your main visibility tool. Log the full output shape at render time and the arguments to any external fetch.

- Check the Network tab. MCP calls show up as POST requests to your /mcp endpoint. Failed requests, unexpected payloads, and CORS preflight errors all surface here.

- React DevTools. The extension works inside ChatGPT iframes and shows component props, state, and hook values.
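For the logging step, one option is to log the shape of the output (keys and types) rather than the values, which keeps the console readable and avoids printing user data. describeShape is a hypothetical helper sketched here under that assumption; it samples only the first element of each array.

```typescript
// Summarize a value as keys and types, down to a limited depth.
function describeShape(value: unknown, depth: number = 3): unknown {
  if (value === null) return "null";
  if (typeof value !== "object") return typeof value; // "string", "number", "undefined", ...
  if (depth <= 0) return Array.isArray(value) ? "array" : "object";
  if (Array.isArray(value)) {
    // Describe only the first element; arrays are assumed to be homogeneous.
    return {
      length: value.length,
      first: value.length > 0 ? describeShape(value[0], depth - 1) : "none",
    };
  }
  const shape: Record<string, unknown> = {};
  for (const [key, child] of Object.entries(value as Record<string, unknown>)) {
    shape[key] = describeShape(child, depth - 1);
  }
  return shape;
}

const sampleOutput = { revenue: 125000, topProducts: [{ name: "Wireless Headphones" }] };
console.log(JSON.stringify(describeShape(sampleOutput)));
// → {"revenue":"number","topProducts":{"length":1,"first":{"name":"string"}}}
```

Calling it with the hook output at render time, for example console.log('output shape', describeShape(output)), shows at a glance whether the output is still undefined or a field has been renamed.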

Ecosystem Gotchas in 2026

A few things have changed in the MCP Apps ecosystem that trip up developers porting older code:

- window.openai is a compatibility shim. New apps should use @modelcontextprotocol/ext-apps with new App() and await app.connect(). The sunpeak hooks wrap this for you, so most app code does not touch it directly, but if you see window.openai in a tutorial written before late 2025, treat it as legacy.

- Tool result token limits. ChatGPT and Claude both cap tool results (Claude at 25,000 tokens per result). Large payloads get truncated silently, which shows up as missing fields in your UI. Paginate or summarize before returning.

- Handler timeouts. Tool handlers must return within about 5 minutes. For long-running work, return a status immediately and expose a progress tool the host can call again.

- Cross-host testing. If you want your ChatGPT App to also work as a Claude Connector, test both hosts in the inspector. A layout that fits ChatGPT’s inline mode may overflow Claude’s narrower card width.
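To stay under a tool result cap, a handler can return items in pages sized by an estimated token count. The sketch below is built on loud assumptions: estimateTokens uses a rough heuristic of about four characters per token rather than a real tokenizer, and paginate, Page, and the cursor scheme are hypothetical. Items are assumed to be JSON-serializable.

```typescript
// Rough heuristic (an assumption): about 4 characters of JSON per token.
function estimateTokens(value: unknown): number {
  return Math.ceil(JSON.stringify(value).length / 4);
}

interface Page<T> {
  items: T[];
  nextCursor: number | null; // index to resume from, or null when done
}

// Collect items starting at cursor until the estimated budget is used up.
function paginate<T>(items: T[], cursor: number, tokenBudget: number): Page<T> {
  const page: T[] = [];
  let used = 0;
  for (const item of items.slice(cursor)) {
    const cost = estimateTokens(item);
    // Always include at least one item so the cursor makes progress.
    if (page.length > 0 && used + cost > tokenBudget) break;
    page.push(item);
    used += cost;
  }
  const next = cursor + page.length;
  return { items: page, nextCursor: next < items.length ? next : null };
}

const orders = Array.from({ length: 10 }, (_, id) => ({ id }));
console.log(paginate(orders, 0, 5)); // two items, nextCursor: 2
console.log(paginate(orders, 8, 5)); // last two items, nextCursor: null
```

Returning nextCursor in the structured output lets the host call the tool again for the next page instead of receiving a silently truncated payload.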

Debugging Checklist

When a ChatGPT App misbehaves, run through this in order:

- Reproduce in the local inspector first. If it works there, the bug is more likely in the deployment or host environment than in your code.

- Open DevTools Console and look for errors.

- Check the MCP server terminal for handler errors or failed tool calls.

- Inspect the toolInput and tool output values in the sidebar. Do they match what the component expects?

- If you see a shape mismatch, write an integration test with the mcp fixture so the bug stays fixed.

- For display mode or theme bugs, capture a screenshot in each mode and diff against the baseline.

- If the bug only happens in real ChatGPT, check the resource cache, the Connector Refresh button, and the iframe console context.

- For MCP protocol errors, verify your tool registration with mcp.listTools() and confirm annotations and schemas match the spec.

Most bugs fall into one of these steps. The ones that do not are usually in the host or the protocol itself, which are rare enough that reporting them is faster than debugging them.

Get Started

The full sunpeak debugging flow is built into npx sunpeak new. You get the inspector, simulation files, the mcp and inspector test fixtures, and visual regression out of the box. If you already have an MCP server, run npx sunpeak inspect --server URL or npx sunpeak test init --server URL to get the debugging and testing tools without changing your server code.

For the full testing story, see the complete guide to testing ChatGPT Apps and the testing framework docs. For protocol-level bugs, integration testing with the mcp fixture is the fastest way to catch contract drift before it reaches production.

Further Reading

- Complete guide to testing ChatGPT Apps and MCP Apps

- Integration testing for MCP Apps - the mcp fixture and protocol-level tests

- MCP App error handling - loading, error, and cancelled states

- What I Wish I Knew About Building ChatGPT Apps

- ChatGPT App display mode reference - inline, pip, and fullscreen

- Debugging Claude Connectors - the Claude-side equivalent

- How to run a ChatGPT App locally

- ChatGPT App framework

- MCP App framework

- Testing framework

- useToolData documentation

- Troubleshooting MCP Apps

Frequently Asked Questions

How do I debug a ChatGPT App locally?

Run "pnpm dev" inside a sunpeak project to start the local inspector at localhost:3000. The inspector replicates the ChatGPT runtime with DevTools access, HMR on every code change, and a sidebar that shows live tool input, tool output, display mode, and theme values. You can edit any of those values in place to reproduce a bug without redeploying. For an existing MCP server that is not a sunpeak project, run "npx sunpeak inspect --server URL" instead.

Why is my ChatGPT App not rendering in ChatGPT?

The most common causes are: the MCP server is not running or is unreachable, the tunnel URL in ChatGPT settings is missing the /mcp suffix, a CORS error is blocking the resource fetch, the tool handler is not returning structuredContent, or the resource component name does not match the tool config. Check your terminal for server errors, verify the ngrok tunnel is active, and use the sunpeak inspector to isolate whether the issue is server-side or client-side.

Why are my ChatGPT App changes not showing up after deployment?

ChatGPT aggressively caches both resource bundles and tool definitions. You must change the Resource URI on every bundle update (append a timestamp or hash) and click Refresh in the ChatGPT Connector modal. Existing chats may still have cached tool schemas, so start a new chat to see new tool names or argument changes. sunpeak cache-busts Resource URIs automatically on every build.

How do I debug ChatGPT App hooks like useToolData?

In the sunpeak inspector sidebar you can see and edit the live toolInput and tool output without restarting your app. For reproducible cases, create a simulation file under tests/simulations/ with toolInput and toolResult.structuredContent fields. The inspector loads that file automatically so useToolData() returns the same values on every run. For MCP protocol-level testing of the shape your resource expects, use the mcp fixture from sunpeak/test with callTool().

Why does my ChatGPT App look different in different display modes?

ChatGPT Apps render in inline, pip, and fullscreen modes, each with different size constraints and safe areas. Use the useDisplayMode hook to detect the current mode and adapt your layout. Test each mode with the inspector fixture from sunpeak/test by passing displayMode: "inline", "pip", or "fullscreen" to inspector.renderTool(). Add visual regression tests to catch layout drift between modes.

How do I see console logs from my ChatGPT App?

In the sunpeak inspector, open browser DevTools (F12 or Cmd+Option+I) to see console.log output from your resource component; MCP server logs appear in the terminal running pnpm dev. In production ChatGPT, open DevTools and switch the context dropdown from "top" to the iframe labeled "root" to see logs from your app. Log strategically around useToolData reads and any external fetches so you can trace issues in production where the inspector sidebar is not available.

How do I debug theme issues in ChatGPT Apps?

Use the useTheme hook to detect light and dark mode, then base your styles on host CSS variables or the theme value. Test both themes with the inspector fixture by passing theme: "light" or theme: "dark" to inspector.renderTool(). Add visual regression tests per theme to catch contrast and readability regressions when host variables change.

Why is my ChatGPT App showing a blank screen?

Blank screens almost always mean a JavaScript error during render. Open DevTools Console and look for stack traces. Common causes: useToolData() returns undefined output before data loads and your component reads a field on it, a hook is called conditionally, a missing error boundary lets a render error bubble up, or the bundle failed to build. Add loading and error states, wrap resources in error boundaries, and run "pnpm build" to confirm the production bundle compiles.