What I Wish I Knew About Building ChatGPT Apps (April 2026)

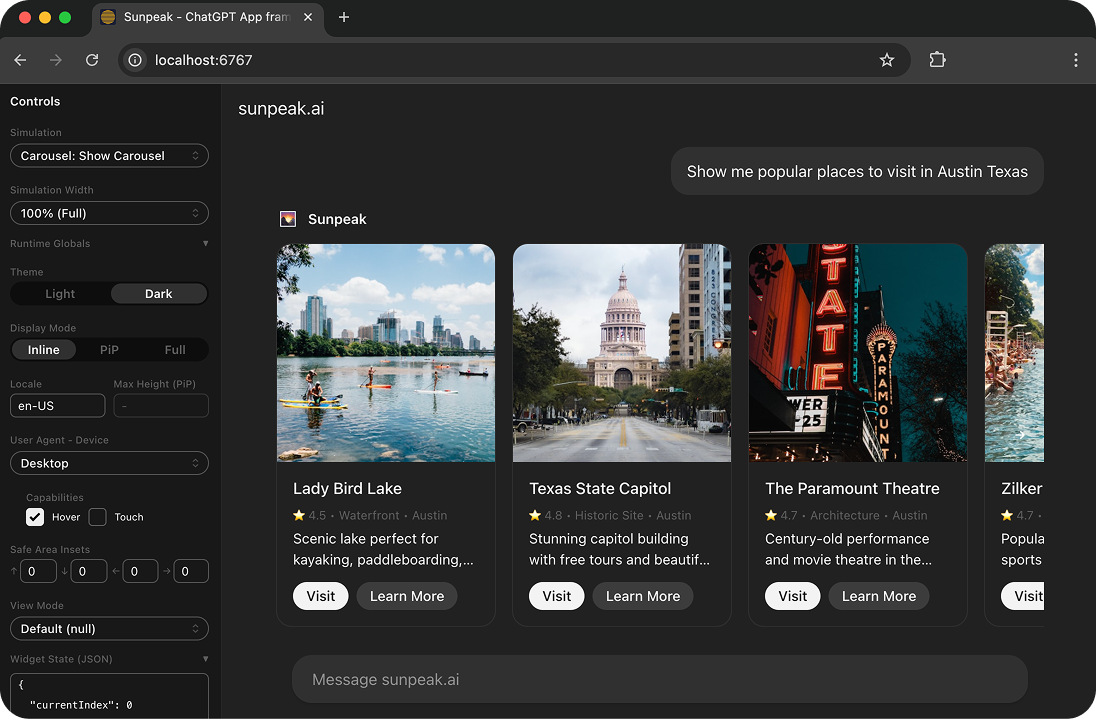

sunpeak ChatGPT App inspector running locally.

After building ChatGPT Apps since the early days and watching the platform shift to MCP Apps, I’ve collected lessons that aren’t in the official docs. Here are the four biggest things I wish someone had told me before I started.

TL;DR: Architect around MCP (Resources + Tools), use @modelcontextprotocol/ext-apps instead of window.openai for new apps, cache-bust resource URIs on every build, and know that the runtime API has more fields than what’s documented.

Lesson 1: Architect around MCP from day one

The official docs make ChatGPT Apps sound like they merely use MCP but aren’t MCP themselves. That framing undersells it. Think of ChatGPT Apps as the GUI layer of MCP, and build your entire app around MCP concepts.

Every UI page is a Resource. Every API endpoint is a Tool. Tools reference their UI through the _meta.ui.resourceUri field. An App has one or more Resources, and a Resource has one or more Tools.
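A minimal sketch of that wiring, using plain TypeScript shapes rather than any SDK types. Only `_meta.ui.resourceUri` comes from the text above; the interface names, the `ui://counter/...` URI, the tool names, and the `findDanglingTools` helper are all hypothetical.

```typescript
// Illustrative shapes only. Apart from `_meta.ui.resourceUri`, every name here
// is an assumption made for this sketch, not an SDK type.
interface UiResourceDecl {
  uri: string; // one UI page
  title: string;
}

interface UiToolDecl {
  name: string; // one API endpoint
  _meta: { ui: { resourceUri: string } };
}

// One Resource (hypothetical URI) ...
const counterResources: UiResourceDecl[] = [
  { uri: "ui://counter/index.html", title: "Counter" },
];

// ... shared by two Tools that both render into it.
const counterTools: UiToolDecl[] = [
  { name: "show_counter", _meta: { ui: { resourceUri: "ui://counter/index.html" } } },
  { name: "increment_counter", _meta: { ui: { resourceUri: "ui://counter/index.html" } } },
];

// Returns the names of tools whose resourceUri matches no declared Resource.
function findDanglingTools(resources: UiResourceDecl[], tools: UiToolDecl[]): string[] {
  return tools
    .filter((tool) => !resources.some((res) => res.uri === tool._meta.ui.resourceUri))
    .map((tool) => tool.name);
}
```

Running a check like `findDanglingTools` at build time is one cheap way to catch a Tool that points at a Resource you renamed or removed.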

My early apps didn’t follow these boundaries, and the abstractions I built broke down in production ChatGPT. The MCP spec now has stable interfaces for this (defined in @modelcontextprotocol/ext-apps), so the structure is clearer than it was six months ago.

Lesson 2: Use the MCP Apps bridge, not window.openai

If you’re starting a new ChatGPT App in 2026, build on @modelcontextprotocol/ext-apps instead of the old window.openai global. The old SDK still works as a compatibility layer, but the MCP Apps bridge is the path forward because it works across ChatGPT, Claude, and any other host that supports the MCP Apps spec.

Here’s what changed:

| Old (window.openai) | New (MCP Apps) |
|---|---|
| Implicit global | new App(); await app.connect() |
| window.openai.toolInput | app.ontoolinput = (params) => {} |
| callTool(name, args) | callServerTool({ name, arguments }) |
| openExternal({ href }) | openLink({ url }) |
| notifyIntrinsicHeight(h) | Auto-resize via ResizeObserver |
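To make two rows of the table above concrete, here is a sketch of a thin facade over both layers. The `LegacyOpenAiLike` and `McpAppLike` interfaces are structural stand-ins whose method names mirror the table; their exact signatures are assumptions, not the SDK’s published typings, and `HostActions` is a hypothetical name.

```typescript
// Structural stand-ins for the two APIs. Method names mirror the table, but
// the signatures are assumptions rather than the real SDK typings.
interface LegacyOpenAiLike {
  callTool(name: string, args: Record<string, unknown>): Promise<unknown>;
  openExternal(payload: { href: string }): void;
}

interface McpAppLike {
  callServerTool(request: { name: string; arguments: Record<string, unknown> }): Promise<unknown>;
  openLink(request: { url: string }): unknown;
}

// Hypothetical facade so widget code calls one shape regardless of the layer.
interface HostActions {
  callTool(name: string, args: Record<string, unknown>): Promise<unknown>;
  openUrl(url: string): void;
}

// New bridge: positional arguments become a single request object.
function actionsFromMcpApp(app: McpAppLike): HostActions {
  return {
    callTool: (name, args) => app.callServerTool({ name, arguments: args }),
    openUrl: (url) => {
      void app.openLink({ url });
    },
  };
}

// Legacy global: `href` instead of `url`, positional tool arguments.
function actionsFromLegacy(openai: LegacyOpenAiLike): HostActions {
  return {
    callTool: (name, args) => openai.callTool(name, args),
    openUrl: (url) => openai.openExternal({ href: url }),
  };
}
```

Keeping the rename logic in one place like this means the rest of the widget does not care which layer is underneath, which helps while the ChatGPT-only features still live on window.openai.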

The new SDK also adds things the old one doesn’t have: ontoolinputpartial for streaming tool input, ontoolcancelled, availableDisplayModes, and autoResize. A few ChatGPT-only features like uploadFile and getFileDownloadUrl haven’t made it into the MCP Apps spec yet, so you’ll still need window.openai for those.

sunpeak’s App class wraps both the MCP Apps bridge and host-specific APIs into a single typed interface, so you can use everything from one place without worrying about which layer to call.

Lesson 3: Invalidate all the caches

When deploying your ChatGPT App, it can be hard to tell if your Resource changes have been picked up. ChatGPT aggressively caches MCP resources, so to see your latest version you have to update the Resource URI on your MCP server and click “Refresh” in the ChatGPT Connector modal on every change.

I set up my project to append a base-32 timestamp to Resource URIs on every build so they always cache-bust on the ChatGPT side. Even then, I still have to refresh the connection on every UI change. sunpeak handles this automatically, but if you’re doing it by hand, make sure your build step touches the URI.
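A minimal sketch of such a build step, assuming the stamp goes right before the file extension. The function name, the `-<stamp>` placement, and the example URI are illustrative choices, not what sunpeak actually does.

```typescript
// Hypothetical build helper: derive a fresh Resource URI from the build time.
// The timestamp is encoded in base 32 to keep the suffix short.
function cacheBustUri(baseUri: string, buildTimeMs: number = Date.now()): string {
  const stamp = Math.floor(buildTimeMs).toString(32);
  const lastSlash = baseUri.lastIndexOf("/");
  const lastDot = baseUri.lastIndexOf(".");

  // Only treat the dot as an extension separator if it sits in the final
  // path segment; otherwise append the stamp to the end of the URI.
  if (lastDot > lastSlash) {
    return `${baseUri.slice(0, lastDot)}-${stamp}${baseUri.slice(lastDot)}`;
  }
  return `${baseUri}-${stamp}`;
}

// Example with a fixed time so the result is reproducible:
// cacheBustUri("ui://counter/index.html", 1024) returns "ui://counter/index-100.html"
```

Whatever scheme you pick, the same stamped URI has to be used both where the Resource is registered and in every Tool’s `_meta.ui.resourceUri`, or the Tool will point at a Resource that no longer exists.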

Lesson 4: The runtime API is bigger than the docs say

The official OpenAI documentation lists only about two-thirds of the actual runtime API. I can’t guarantee these undocumented fields will stay forever, but they’re real and some of them are useful. Here’s the complete list of window.openai properties I queried from a live app in ChatGPT:

callCompletion: (...i) => {…}

callTool: (...i) => {…}

displayMode: "inline"

downloadFile: (...i) => {…}

locale: "en-US"

maxHeight: undefined

notifyEscapeKey: (...i) => {…}

notifyIntrinsicHeight: (...i) => {…}

notifyNavigation: (...i) => {…}

notifySecurityPolicyViolation: (...i) => {…}

openExternal: (...i) => {…}

openPromptInput: (...i) => {…}

requestCheckout: (...i) => {…}

requestClose: (...i) => {…}

requestDisplayMode: (...i) => {…}

requestLinkToConnector: (...i) => {…}

requestModal: (...i) => {…}

safeArea: { insets: { … } }

sendFollowUpMessage: (...i) => {…}

sendInstrument: (...i) => {…}

setWidgetState: u => {…}

streamCompletion: (...l) => {…}

subjectId: "v1/…"

theme: "dark"

toolInput: {}

toolOutput: { text: 'Rendered Show a simple counter tool!' }

toolResponseMetadata: null

updateWidgetState: (...i) => {…}

uploadFile: (...i) => {…}

userAgent: { device: { … }, capabilities: { … } }

view: { params: null, mode: 'inline' }

widget: { state: { … }, props: { … }, setState: ƒ }

widgetState: { count: 0 }

Be careful with the OpenAI example apps. They don’t handle all of these globals, documented or not, and they haven’t been updated to use the MCP Apps bridge.
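If you do call these globals directly, it is worth guarding every call, since an undocumented function can disappear without notice. Below is a defensive sketch; `callIfAvailable`, `HostGlobals`, and `CallResult` are hypothetical names for this example and not part of any SDK.

```typescript
// Hypothetical helper, not part of any SDK: invoke a host function only when
// the host actually exposes it, so a removed undocumented API fails softly.
type HostGlobals = { [key: string]: unknown };

type CallResult = { ok: true; value: unknown } | { ok: false };

function callIfAvailable(
  host: HostGlobals | undefined,
  method: string,
  ...args: unknown[]
): CallResult {
  const candidate = host ? host[method] : undefined;

  // Plain fields such as `theme` or `locale` are not callable, and a missing
  // host (for example, outside ChatGPT) has nothing to call at all.
  if (typeof candidate !== "function") {
    return { ok: false };
  }

  // Preserve `this` in case the host implementation relies on it.
  return { ok: true, value: candidate.apply(host, args) };
}
```

In a widget you would pass `window.openai` as the host and fall back to some other behavior whenever `ok` is false, instead of letting a missing function throw at runtime.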

The safest way to work with both documented and undocumented APIs is through sunpeak’s typed React hooks, which abstract over host differences and give you TypeScript types for everything, including fields that aren’t in the official docs. You can test all of these APIs locally with npx sunpeak inspect and the MCP App inspector without needing a paid ChatGPT account or burning host credits.

Get Started

npx sunpeak new

Further Reading

- How to Build a ChatGPT App - full tutorial from setup to deployment

- Complete Guide to Testing ChatGPT Apps - unit, e2e, and visual testing

- ChatGPT App Display Mode Reference - inline, compact, and full display modes

- How to Build a ChatGPT App Without a Paid Account

- Build an MCP App for ChatGPT and Claude - cross-platform guide

- MCP Concepts Explained - Resources, Tools, and the protocol

- Interactive ChatGPT inspector

- ChatGPT App framework

- MCP Apps documentation

Frequently Asked Questions

What is the relationship between ChatGPT Apps and MCP Apps?

ChatGPT Apps are MCP Apps built on the Model Context Protocol. The MCP extension @modelcontextprotocol/ext-apps defines the standard for interactive UIs inside AI hosts. Every UI page is an MCP Resource, and every API endpoint is an MCP Tool. ChatGPT, Claude, and other hosts all render MCP Apps the same way: sandboxed iframes with JSON-RPC over postMessage.
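For a sense of what travels over that postMessage channel, here is a sketch of a JSON-RPC 2.0 request for MCP’s standard `tools/call` method. It assumes the bridge forwards tool calls in this shape; the `buildToolCallRequest` helper and its types are hypothetical, and in practice the bridge builds and sends these messages for you.

```typescript
// JSON-RPC 2.0 request envelope. The helper below is illustrative only.
interface JsonRpcRequest<P> {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params: P;
}

interface ToolCallParams {
  name: string;
  arguments: Record<string, unknown>;
}

// Builds a `tools/call` request; the caller supplies a unique id so that the
// response can be matched back to this request.
function buildToolCallRequest(
  id: number,
  toolName: string,
  args: Record<string, unknown>
): JsonRpcRequest<ToolCallParams> {
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name: toolName, arguments: args },
  };
}
```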

Should I use window.openai or @modelcontextprotocol/ext-apps for new ChatGPT Apps?

Use @modelcontextprotocol/ext-apps for new apps. The old window.openai global is now a compatibility layer. The MCP Apps bridge gives you explicit lifecycle control with new App() and await app.connect(), works across ChatGPT and Claude, and adds features like ontoolinputpartial and autoResize that the old SDK does not have.

Why are my ChatGPT App changes not showing up after deployment?

ChatGPT aggressively caches MCP resources. You must update the Resource URI on your MCP server AND click "Refresh" in the ChatGPT Connector modal for every change. sunpeak handles cache-busting automatically by appending timestamps to Resource URIs on every build.

What is the correct architecture for a ChatGPT App?

Structure your ChatGPT App according to MCP concepts: an App contains one or more Resources (UI pages), and each Resource can have one or more Tools (API actions). Tools reference their UI via the _meta.ui.resourceUri field. This architecture aligns with how ChatGPT and Claude expect to interact with your app.

What undocumented ChatGPT App runtime APIs are available?

Undocumented APIs include callCompletion, streamCompletion, downloadFile, uploadFile, notifyEscapeKey, requestModal, requestCheckout, openExternal, sendFollowUpMessage, and more. These may change without notice but enable features beyond the official documentation. sunpeak exposes many of these through its typed React hooks.

How do I test ChatGPT Apps locally without a paid account?

Use sunpeak to test ChatGPT Apps locally. Run pnpm dev to start a local MCP dev server with hot module replacement, then use npx sunpeak inspect to open a replica of the ChatGPT and Claude runtimes in your browser. No paid accounts, no host credits, no manual refresh cycles.

What changed in the MCP Apps migration from window.openai?

The main changes are: window.openai (implicit global) becomes new App() with explicit connect(), toolInput becomes an ontoolinput callback, callTool becomes callServerTool, openExternal becomes openLink, and notifyIntrinsicHeight is replaced by automatic resizing via ResizeObserver. Features like uploadFile and getFileDownloadUrl are still ChatGPT-only and not yet in the MCP Apps spec.

Can I build one ChatGPT App that also works in Claude?

Yes. MCP Apps are host-agnostic by design. If you build on the @modelcontextprotocol/ext-apps standard, your app runs in any host that supports MCP Apps, including ChatGPT and Claude. sunpeak lets you build, test, and deploy across both from a single codebase.